- Database-to-Database ETL

- Flat File ETL

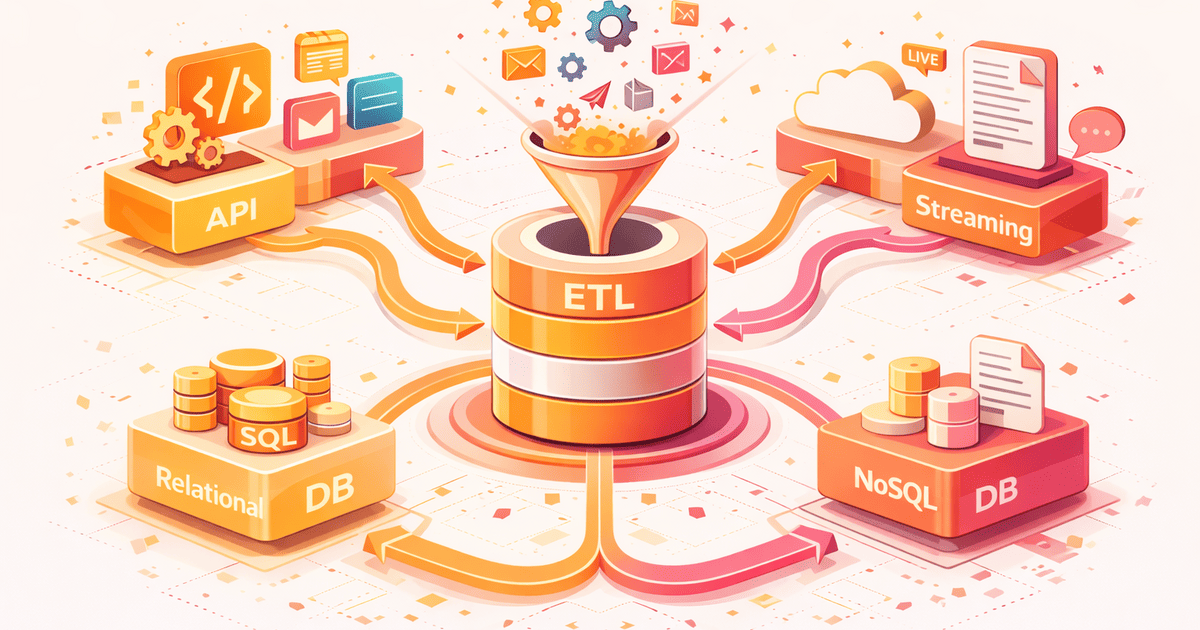

- API-Based ETL

- JSON, XML, and Document ETL

- Streaming and Event-Driven ETL

- NoSQL Database ETL

- Cloud Storage ETL

- Spreadsheet ETL

- Log Processing ETL

- FTP and Legacy System ETL

- OCR and Document Extraction ETL

- Web Scraping ETL

- Media ETL: Audio, Video, and Image

- How to Choose the Right Type

Not all types of ETL pipelines are the same. The term “ETL” often gets treated as a single concept, but the reality is that every data source requires a fundamentally different extraction strategy, transformation approach, and loading pattern. A pipeline that moves data from MySQL to PostgreSQL looks nothing like one that transcribes audio files into searchable text, or one that ingests real-time events from a message queue.

After years of building data integration systems, I have worked with most of these types of ETL pipelines. Each one taught me something different about how data moves through systems. This guide covers every major type, what makes each one unique, and the real-world challenges you will face with each approach.

If you are new to ETL concepts, start with Understanding ETL: The Philosophy Behind Data Pipelines first. If you already understand the basics, this guide will show you the full landscape of what types of ETL pipelines exist and when to use each one.

Database-to-Database ETL

This is the most common type of ETL pipeline. You are moving data from one relational database to another. MySQL to PostgreSQL. SQL Server to BigQuery. Oracle to Snowflake. The source and destination both understand SQL, but they speak slightly different dialects.

| Aspect | Details |

|---|---|

| Sources | MySQL, PostgreSQL, SQL Server, Oracle, MariaDB, SQLite |

| Extraction | SQL queries, change data capture (CDC), database replication logs |

| Key Challenge | Schema differences, data type mismatches, character encoding, large table pagination |

| Common Pattern | Full load for initial migration, incremental load for ongoing sync |

The under-the-hood challenge here is pagination. You cannot just run SELECT * FROM customers when the table has 50 million rows. You need cursor-based pagination or chunked queries using primary key ranges. The extraction phase must handle connection timeouts, lock contention, and the reality that the source database is still serving production traffic while you are reading from it.

Type conversion is another hidden complexity. MySQL’s TINYINT(1) might mean boolean, but PostgreSQL expects an actual BOOLEAN type. SQL Server’s DATETIME2 has different precision than PostgreSQL’s TIMESTAMP. These differences are invisible until they cause failures at 3 AM.

Flat File ETL

Flat file ETL processes data from CSV, TSV, fixed-width, and other delimited text files. Despite being one of the oldest data formats, flat files remain one of the most common data exchange methods in enterprise environments.

| Aspect | Details |

|---|---|

| Formats | CSV, TSV, fixed-width, pipe-delimited, custom delimiters |

| Extraction | File system read, stream parsing, line-by-line processing |

| Key Challenge | Encoding issues, inconsistent delimiters, quoted fields with embedded newlines, no schema enforcement |

| Common Pattern | Streaming iterator with memory-efficient line-by-line processing |

The real problem with flat files is that they have no schema enforcement. A CSV file does not tell you that column 3 should be a date. It does not tell you that column 7 should never be null. Every assumption you make about the data must be validated during the transform and clean phases. One malformed row with an extra comma can shift every column in every subsequent row.

Memory management is critical here. A 10 GB CSV file cannot be loaded into memory all at once. You need iterator patterns that process one row at a time while keeping memory constant. For more on this, see Memory-Efficient Data Processing.

API-Based ETL

API-based ETL pulls data from web services using REST, GraphQL, or SOAP protocols. This is the fastest-growing type of ETL pipeline because every modern SaaS product exposes an API. Stripe for payments. Salesforce for CRM. HubSpot for marketing. GitHub for development metrics.

| Aspect | Details |

|---|---|

| Protocols | REST, GraphQL, SOAP, gRPC |

| Extraction | HTTP requests with pagination, authentication tokens, rate limit handling |

| Key Challenge | Rate limiting, pagination strategies, authentication expiry, API versioning changes |

| Common Pattern | Paginated fetch with exponential backoff and retry logic |

The under-the-hood complexity with API ETL is rate limiting. Stripe allows 100 requests per second. Salesforce gives you a daily API call limit. If your pipeline does not respect these limits, the API will throttle you or block you entirely. You need retry logic with exponential backoff baked into your extraction phase.

Pagination is another challenge that varies by API. Some APIs use page numbers. Others use cursors. Some use link headers. Your extractor needs to handle all these patterns, and it needs to detect when an API silently changes its pagination behavior during a version update.

JSON, XML, and Document ETL

Document-format ETL handles nested, hierarchical data structures. Unlike flat CSV rows, JSON and XML documents can contain arrays, nested objects, and mixed-depth structures that do not map cleanly to relational tables.

| Aspect | Details |

|---|---|

| Formats | JSON, XML, YAML, TOML, MessagePack, Protocol Buffers |

| Extraction | File parsing, streaming parsers (SAX for XML, JSON streaming), path-based selection |

| Key Challenge | Nested structure flattening, array handling, schema evolution, large document memory usage |

| Common Pattern | Recursive flattening with path-based field mapping |

The core challenge is flattening. A JSON object like {"address": {"city": "London", "country": "UK"}} needs to become flat columns: address_city and address_country. But when you have arrays like {"orders": [...]}, you need to decide: do you create a separate table for orders, or do you denormalize them into the parent record?

XML adds its own complexity with namespaces, attributes versus elements, and the distinction between document-oriented and data-oriented XML. A SOAP response from a legacy banking system looks nothing like a clean REST JSON response, even when they carry the same information.

Streaming and Event-Driven ETL

Streaming ETL processes data in real-time as events arrive, rather than in scheduled batches. This type of ETL pipeline is fundamentally different from all others because there is no “extract all data” step. Data arrives continuously, and your pipeline must keep up.

| Aspect | Details |

|---|---|

| Sources | Apache Kafka, RabbitMQ, Amazon SQS, webhooks, WebSockets, server-sent events |

| Extraction | Consumer groups, topic subscriptions, webhook listeners |

| Key Challenge | Ordering guarantees, exactly-once processing, backpressure, consumer lag |

| Common Pattern | Consumer with offset tracking, dead letter queues for failures |

The fundamental difference is that batch ETL asks “give me everything since last time,” while streaming ETL says “process each event as it arrives.” This changes your entire architecture. You cannot retry a failed batch easily because new events keep coming. You need dead letter queues for failed events, offset tracking to know where you stopped, and backpressure handling for when events arrive faster than you can process them.

For observability patterns that work with streaming pipelines, see Event-Driven Observability.

NoSQL Database ETL

NoSQL ETL extracts data from document stores, key-value stores, wide-column databases, and graph databases. The challenge is that these systems store data in fundamentally different structures than relational databases.

| Aspect | Details |

|---|---|

| Sources | MongoDB, DynamoDB, Cassandra, Redis, Neo4j, CouchDB, Elasticsearch |

| Extraction | Collection scans, change streams, export utilities, query-based extraction |

| Key Challenge | Schema-less documents, inconsistent field types across documents, embedded vs referenced relationships |

| Common Pattern | Schema inference from sample documents, then validation against full dataset |

The biggest challenge with NoSQL ETL is that there is no guaranteed schema. In MongoDB, one customer document might have a phone field as a string, another might have it as an array of strings, and a third might not have it at all. Your pipeline must handle all these variations without crashing. Schema inference from a sample is not enough — you need to validate and adapt as you process the full dataset.

Cloud Storage ETL

Cloud storage ETL processes files stored in object storage services like Amazon S3, Azure Blob Storage, or Google Cloud Storage. These files often use columnar formats optimized for analytics workloads.

| Aspect | Details |

|---|---|

| Sources | Amazon S3, Azure Blob, Google Cloud Storage, MinIO |

| Formats | Parquet, Avro, ORC, Delta Lake, compressed CSV/JSON |

| Key Challenge | Partition discovery, schema evolution in columnar files, cost of listing/scanning large buckets |

| Common Pattern | Partition-aware reading with predicate pushdown for efficiency |

Columnar formats like Parquet and Avro are fundamentally different from row-based formats like CSV. Instead of storing data row by row, they store data column by column. This means reading just the customer_name column from a 100-column Parquet file is extremely fast because you skip the other 99 columns entirely. Your ETL pipeline needs to understand these formats to take advantage of predicate pushdown and column pruning.

Spreadsheet ETL

Spreadsheet ETL is surprisingly common in enterprise environments. Business teams export data to Excel, add manual annotations, and upload it back. Finance sends monthly reports as spreadsheets. HR sends employee data in XLSX format.

| Aspect | Details |

|---|---|

| Sources | Excel (.xlsx, .xls), Google Sheets, LibreOffice Calc, Numbers |

| Extraction | Library-based parsing, Google Sheets API, multi-sheet handling |

| Key Challenge | Multiple sheets, merged cells, formula dependencies, inconsistent formatting, manual data entry errors |

| Common Pattern | Header detection, data region identification, cell-by-cell type inference |

Spreadsheets are the most unpredictable data source. Users merge cells, leave blank rows as separators, mix data types in the same column, and use formulas that reference other sheets. Your pipeline needs to handle all of this gracefully. The extraction phase for spreadsheets is often the most complex because you are essentially parsing a visual layout, not a data structure.

Log Processing ETL

Log ETL transforms unstructured or semi-structured log files into queryable, structured data. Application logs, web server access logs, system logs, and audit trails all contain valuable data buried in text patterns.

| Aspect | Details |

|---|---|

| Sources | Application logs, nginx/Apache access logs, syslog, audit trails, CloudWatch, Stackdriver |

| Extraction | Regex pattern matching, log format parsers, structured logging (JSON lines) |

| Key Challenge | Multi-line log entries, inconsistent formats across versions, high volume, time zone handling |

| Common Pattern | Grok patterns or regex with streaming tail-based ingestion |

The under-the-hood challenge with log ETL is multi-line entries. A Java stack trace spans dozens of lines, but it is logically one log entry. Your parser needs to detect where one entry ends and the next begins. Structured logging formats like JSON Lines solve this problem by putting one complete JSON object per line, but legacy applications rarely use structured logging.

FTP and Legacy System ETL

FTP-based ETL is still alive and well in finance, healthcare, government, and manufacturing. Many enterprise partners exchange data by dropping files on FTP or SFTP servers on a daily schedule.

| Aspect | Details |

|---|---|

| Sources | FTP, SFTP, FTPS, mainframe exports, EDI (Electronic Data Interchange), legacy proprietary formats |

| Extraction | Scheduled file polling, file-based triggers, download-then-process |

| Key Challenge | File arrival timing, partial uploads, duplicate detection, fixed-width legacy formats |

| Common Pattern | Poll → download → verify checksum → process → archive |

The real-world challenge with FTP ETL is timing. How do you know when a file is fully uploaded? If you start reading a file that is still being written, you get partial data. Common solutions include watching for a companion “.done” flag file, checking file size stability over time, or requiring the sender to upload to a staging directory and then move it to the pickup directory atomically.

OCR and Document Extraction ETL

OCR (Optical Character Recognition) ETL extracts structured data from unstructured documents. Invoices become line items. Scanned contracts become searchable text. Handwritten forms become database records.

| Aspect | Details |

|---|---|

| Sources | PDF documents, scanned images, photographs of documents, fax outputs |

| Extraction | OCR engines (Tesseract, AWS Textract, Google Vision), layout analysis, table detection |

| Key Challenge | Recognition accuracy, layout preservation, handwriting variation, multi-language support |

| Common Pattern | Pre-process image → OCR → post-process text → validate against expected schema |

OCR ETL has a unique challenge that other types do not: confidence scoring. When the OCR engine reads a blurry invoice, it might recognize “1,000” or “1.000” or “l,000”. Your pipeline needs confidence thresholds. Records below the threshold get flagged for human review instead of being loaded automatically. This means your pipeline architecture must include a human-in-the-loop path alongside the automated path.

Web Scraping ETL

Web scraping ETL extracts data from websites that do not provide APIs. Price monitoring, competitor analysis, public data collection, and content aggregation all rely on scraping pipelines.

| Aspect | Details |

|---|---|

| Sources | HTML pages, JavaScript-rendered SPAs, public databases, government portals |

| Extraction | HTML parsing (DOM selectors, XPath), headless browsers, HTTP requests |

| Key Challenge | Website structure changes, anti-bot measures, JavaScript rendering, rate limiting, legal considerations |

| Common Pattern | Fetch HTML → parse selectors → validate extracted fields → handle structure changes gracefully |

The fundamental problem with scraping ETL is fragility. When a website changes its HTML structure, your selectors break. Unlike APIs which have versioning and deprecation notices, websites change without warning. Your pipeline needs robust error detection that alerts you when extraction yields unexpected results, rather than silently loading garbage data.

Media ETL: Audio, Video, and Image

Media ETL is the newest frontier. With AI and machine learning capabilities becoming accessible, pipelines that process audio, video, and image data are becoming practical for mainstream engineering teams.

| Media Type | ETL Use Case | Tools |

|---|---|---|

| Audio | Speech-to-text transcription, sentiment analysis, speaker identification | Whisper, AWS Transcribe, Google Speech-to-Text |

| Video | Frame extraction, subtitle generation, object detection, metadata extraction | FFmpeg, AWS Rekognition, OpenCV |

| Image | OCR, EXIF metadata extraction, classification, feature extraction for search | Tesseract, Pillow, AWS Rekognition, CLIP |

Media ETL pipelines share a common pattern: the raw media file goes through an AI model to produce structured data, and then that structured data flows through a traditional ETL pipeline. A 2-hour meeting recording becomes a transcript, the transcript becomes speaker-labeled segments, and those segments become searchable records in a database. The ETL part is the same — it is the extraction step that is fundamentally different.

For pipelines that prepare data for AI processing, see Building AI-Ready ETL Pipelines.

How to Choose the Right Type of ETL Pipeline

Choosing the right type of ETL pipeline depends on three factors: your data source, your latency requirements, and your data volume.

| If Your Source Is… | Use This Type | Key Consideration |

|---|---|---|

| Another relational database | Database-to-Database ETL | Use CDC for incremental loads over full extracts |

| Files from partners or exports | Flat File or FTP ETL | Validate file completeness before processing |

| SaaS platforms (Stripe, Salesforce) | API-Based ETL | Respect rate limits, handle pagination properly |

| Message queues or event streams | Streaming ETL | Design for exactly-once processing from the start |

| Physical documents or scans | OCR ETL | Build a human review path for low-confidence results |

| Websites without APIs | Web Scraping ETL | Monitor for structure changes, have fallback selectors |

| Audio, video, or images | Media ETL | Separate AI extraction from data transformation |

In practice, most production systems use multiple types of ETL pipelines. You might pull customer data from a database, payment data from Stripe’s API, and invoice scans from an OCR service — all feeding into the same target warehouse. The key insight is that while the extraction strategy differs, the transformation and loading patterns remain consistent. That is why the 6-phase pipeline pattern works regardless of source type.

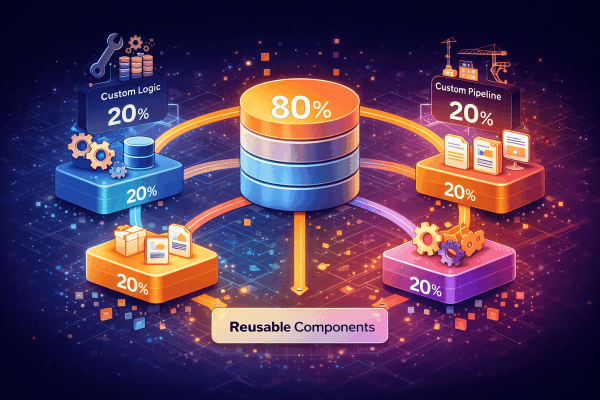

For a practical approach to building pipelines that handle multiple source types with maximum code reuse, see The 80/20 Framework Architecture.