- What is Configuration-Driven ETL?

- How Configuration Loading Works

- Why Environment Variables Matter for Security

- How Field Mapping Works Through Configuration

- Advanced Mappings: Beyond Simple Renames

- Mental Model: The Recipe Book

- How to Validate Configuration Before Processing

- Who Benefits From This Approach

- Common Anti-Patterns in Configuration Design

- Configuration Versioning and Change Management

- Key Takeaways

You have an ETL pipeline that maps “cust_name” to “customer_name”. Then marketing renames the source field to “customer_full_name”. Without configuration-driven ETL, you deploy new code, restart the pipeline, and hope nothing else breaks. With it, you update a JSON file: change “cust_name” to “customer_full_name”. No code deployment. No restart. The next pipeline run uses the new mapping automatically.

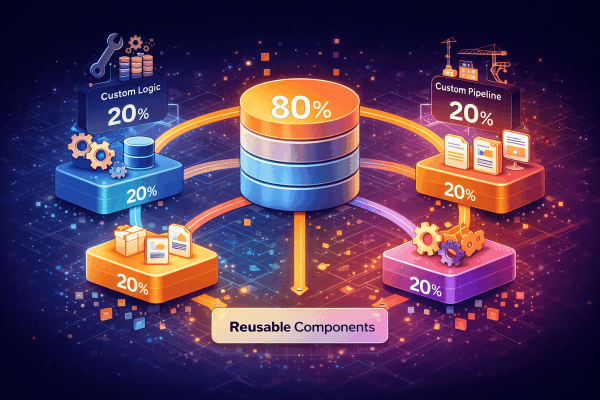

This is the power of separating declaration from logic. The code handles HOW to transform. The configuration handles WHAT to transform. Change one without touching the other. Configuration-driven ETL is not just a design preference. It is a fundamental architectural decision that determines how fast your team can respond to changes, how safely you can modify pipeline behavior, and how many people on your team can contribute to data integration work without being developers.

This builds on the architecture from The 6-Phase Pipeline Pattern. Where that guide focuses on the phases of transformation, this guide focuses on how configuration drives each phase: what to extract, how to map, which cleaners to apply, what validation rules to enforce, and where to load the results.

What is Configuration-Driven ETL and Why Does It Matter?

Configuration-driven ETL separates what a pipeline does from how it does it. The “what” lives in configuration files: field mappings, connection details, validation rules, cleaning options. The “how” lives in code: the framework that reads configuration and executes the operations. This separation means you can change pipeline behavior without writing or deploying code.

| Aspect | Code-Driven Pipeline | Configuration-Driven Pipeline |
|---|---|---|
| Adding a field mapping | Edit code, test, deploy | Edit JSON/YAML, next run picks it up |
| Changing a connection | Edit code or environment file | Update environment variable |
| Who can make changes | Only developers | Developers, analysts, operations |
| Risk of change | Code change can break anything | Config change is scoped to that pipeline |
| Review process | Full code review required | Config diff is easy to review |
| Rollback | Redeploy previous version | Revert config file in version control |
| Testing a change | Run full test suite | Run the specific pipeline with new config |

The practical difference is speed. In a code-driven pipeline, changing a field mapping requires a developer to modify source code, write tests, get a code review, merge, and deploy. In a configuration-driven pipeline, the same change is a one-line edit to a JSON file that can be reviewed and deployed in minutes.

I have seen teams spend days deploying what should have been a 5-minute configuration change, simply because the pipeline behavior was hardcoded. That is wasted engineering time on work that creates zero business value.

How Configuration Loading Works Under the Hood

Let us trace what happens when a pipeline reads its configuration. The loading process has three stages: read, merge, and validate. Each stage catches a different class of problems.

Stage 1: Read the pipeline configuration file

```json
{
  "name": "customer-sync",
  "extractor": {
    "class": "DatabaseExtractor",
    "connection": "legacy_crm",
    "query": "SELECT * FROM customers WHERE updated_at > :last_run"
  },
  "transformers": [
    {
      "class": "MappingTransformer",
      "mappings": { "cust_name": "customer_name", "cust_email": "email" }
    },
    {
      "class": "CleaningTransformer",
      "cleaners": { "email": "email_cleaner", "phone": "phone_cleaner" }
    }
  ],
  "loader": {
    "class": "DatabaseLoader",
    "connection": "main_db",
    "table": "customers",
    "batch_size": 1000
  }
}
```

This file declares the complete pipeline behavior: where to read data, how to transform it, and where to write it. The framework does not care about the specific field names or connection details. It reads the configuration and executes accordingly.
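A minimal Python sketch of how a framework might turn the `class` strings in this file into objects. The component classes and the `REGISTRY` dictionary are hypothetical stand-ins (they only hold their settings and do no real extraction or loading); a name-to-class registry is one common way to wire configuration to code, not necessarily how any specific framework does it.

```python
import json


# Hypothetical component classes: a real framework would implement
# extraction, transformation, and loading behind these constructors.
class DatabaseExtractor:
    def __init__(self, connection, query):
        self.connection = connection
        self.query = query


class MappingTransformer:
    def __init__(self, mappings):
        self.mappings = mappings


class CleaningTransformer:
    def __init__(self, cleaners):
        self.cleaners = cleaners


class DatabaseLoader:
    def __init__(self, connection, table, batch_size=1000):
        self.connection = connection
        self.table = table
        self.batch_size = batch_size


# The only place where config "class" strings are tied to code.
REGISTRY = {cls.__name__: cls for cls in (
    DatabaseExtractor, MappingTransformer, CleaningTransformer, DatabaseLoader)}


def build_component(spec):
    """Instantiate the class named in spec["class"] with the remaining keys."""
    params = dict(spec)
    class_name = params.pop("class")
    if class_name not in REGISTRY:
        raise ValueError(f"Unknown component class: {class_name}")
    return REGISTRY[class_name](**params)


def build_pipeline(config_text):
    """Parse a pipeline config (JSON text) and build its components."""
    config = json.loads(config_text)
    return {
        "name": config["name"],
        "extractor": build_component(config["extractor"]),
        "transformers": [build_component(t) for t in config["transformers"]],
        "loader": build_component(config["loader"]),
    }
```

Adding a new pipeline then means writing a new JSON file; only a genuinely new kind of component requires a new class in the registry.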

Stage 2: Merge configuration from multiple layers

Configuration rarely comes from a single source. A well-designed system merges values from multiple layers, with later layers overriding earlier ones:

| Layer | Priority | What It Contains | Example |
|---|---|---|---|
| Framework defaults | Lowest | Sensible defaults for all pipelines | batch_size: 1000, retry_count: 3, timeout: 300 |
| Environment variables | Medium | Environment-specific values, secrets | DB_HOST=db.internal.company.com, DB_PASS=secret |
| Pipeline config file | High | Pipeline-specific declarations | name, mappings, cleaners, query |
| Runtime overrides | Highest | Execution-time adjustments | batch_size: 500 (for debugging a slow run) |

```
Framework default:  batch_size = 1000
Pipeline config:    (not specified, uses default)
Runtime override:   batch_size = 500
Final value:        batch_size = 500 (highest priority wins)

Framework default:  timeout = 300
Pipeline config:    timeout = 600
Runtime override:   (not specified)
Final value:        timeout = 600 (pipeline config overrides default)
```

This layered approach means you set sensible defaults once, customize per pipeline where needed, and override at runtime for special cases. Most pipelines rely on the defaults and customize only a few values.
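The merge rule can be sketched with Python's `collections.ChainMap`, which searches its maps in order and returns the first match. The layers mirror the table above; `merge_config` and `FRAMEWORK_DEFAULTS` are illustrative names, and the sketch assumes flat key/value settings.

```python
from collections import ChainMap

# Illustrative framework defaults, taken from the layer table.
FRAMEWORK_DEFAULTS = {"batch_size": 1000, "retry_count": 3, "timeout": 300}


def merge_config(defaults, env_values, pipeline_config, runtime_overrides):
    """Merge configuration layers so that higher-priority layers win.

    ChainMap looks keys up left to right, so the highest-priority layer
    (runtime overrides) is listed first and the defaults last.
    """
    merged = ChainMap(runtime_overrides, pipeline_config, env_values, defaults)
    return dict(merged)


# The two traces above: batch_size comes from the runtime override,
# timeout from the pipeline config, retry_count from the defaults.
final = merge_config(FRAMEWORK_DEFAULTS, {}, {"timeout": 600}, {"batch_size": 500})
```

Because `ChainMap` is a shallow lookup, nested sections such as `extractor` are replaced wholesale rather than merged key by key; a framework that needs deep merging would have to recurse into nested dictionaries.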

Stage 3: Validate before processing any data

```
Validation checks:
  ✓ Pipeline name is present and not empty
  ✓ At least one extractor defined
  ✓ At least one loader defined
  ✓ All referenced connections exist in connection pool
  ✓ Connection host is not empty
  ✓ Credentials are available (from environment variables)
  ✓ Mapping source fields match expected source schema
  ✓ Cleaner names reference registered cleaner classes

All checks passed → Pipeline can run

OR

  ✗ Connection "legacy_crm" references undefined host
  → ConfigurationException: "Connection legacy_crm: host is required"
  → Pipeline STOPS before touching any data
```

Validation catches configuration problems before any data is processed. A missing connection string, a misspelled cleaner name, or an invalid batch size gets caught immediately. This is cheaper than discovering the problem halfway through processing 500,000 records.
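A fail-fast version of some of these checks might look like the following Python sketch. `ConfigurationException` matches the name in the output above; the shape of the `connections` dictionary and the set of registered cleaner names are assumptions made for illustration.

```python
class ConfigurationException(Exception):
    """Raised when a pipeline configuration is invalid."""


# Hypothetical set of cleaner names the framework has registered.
REGISTERED_CLEANERS = {"email_cleaner", "phone_cleaner"}


def validate_config(config, connections):
    """Check a pipeline config before any data is read; raise on the first problem.

    `connections` is assumed to map connection names to dicts with a "host" key.
    """
    if not str(config.get("name", "")).strip():
        raise ConfigurationException("Pipeline name is required")
    for section in ("extractor", "loader"):
        if section not in config:
            raise ConfigurationException(f"At least one {section} must be defined")
        conn_name = config[section].get("connection")
        conn = connections.get(conn_name)
        if conn is None:
            raise ConfigurationException(f"Connection {conn_name} is not defined")
        if not conn.get("host"):
            raise ConfigurationException(f"Connection {conn_name}: host is required")
    for transformer in config.get("transformers", []):
        for field, cleaner in transformer.get("cleaners", {}).items():
            if cleaner not in REGISTERED_CLEANERS:
                raise ConfigurationException(f"Field {field}: unknown cleaner {cleaner}")
```

Raising on the first problem keeps the sketch short; the later section on validation discusses running checks in groups and at two points in the pipeline lifecycle.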

Why Environment Variables Matter for Security

Credentials must never live in configuration files. Configuration files get committed to version control. Version control history is permanent. Even if you delete the password later, it exists in the commit history forever.

JSON config (safe to commit to Git):

```json
{
  "connection": {
    "host": "${LEGACY_CRM_HOST}",
    "username": "${LEGACY_CRM_USER}",
    "password": "${LEGACY_CRM_PASS}"
  }
}
```

Environment variables (never in Git):

```
LEGACY_CRM_HOST=db.internal.company.com
LEGACY_CRM_USER=etl_reader
LEGACY_CRM_PASS=super-secret-password
```

The ${VARIABLE} syntax tells the configuration loader to substitute environment values at runtime. The JSON file is safe to commit because it contains no secrets. Credentials live in the environment, managed by your deployment system, secret manager, or CI/CD pipeline.
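Placeholder substitution is small enough to sketch in a few lines of Python. This assumes the `${NAME}` syntax shown above and a hypothetical `substitute_env` helper; it raises an error when a variable is missing rather than silently inserting an empty string.

```python
import os
import re

# Matches ${NAME}, where NAME is a conventional environment-variable name.
PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def substitute_env(value, env=None):
    """Recursively replace ${NAME} placeholders in a parsed config.

    Walks dicts and lists, rewrites strings, and leaves numbers and other
    values untouched. Returns a new structure; the input is not modified.
    """
    env = os.environ if env is None else env
    if isinstance(value, dict):
        return {key: substitute_env(item, env) for key, item in value.items()}
    if isinstance(value, list):
        return [substitute_env(item, env) for item in value]
    if isinstance(value, str):
        def replace(match):
            name = match.group(1)
            if name not in env:
                # Fail loudly: a missing secret should stop the pipeline.
                raise LookupError(f"Environment variable {name} is not set")
            return env[name]
        return PLACEHOLDER.sub(replace, value)
    return value
```

Passing a different `env` mapping (or running under a different process environment) resolves the same JSON file to different hosts and users. Avoid logging the resolved result, since it now contains secrets.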

Same code, same config file, different environments:

| Environment | LEGACY_CRM_HOST | LEGACY_CRM_USER | Config File |
|---|---|---|---|
| Development | localhost | dev_user | Same pipelines/customer-sync.json |
| Staging | staging-db.company.com | staging_etl | Same pipelines/customer-sync.json |
| Production | db.internal.company.com | etl_reader | Same pipelines/customer-sync.json |

No code changes between environments. No separate config files for dev vs staging vs prod. The only difference is which environment variables are set. This follows the Twelve-Factor App principle of storing config in the environment.

How Field Mapping Works Through Configuration

Let us trace how configuration drives the mapping phase. This is the most common operation in any ETL pipeline: renaming fields from the source schema to the destination schema.

Configuration:

```json
{
  "mappings": {
    "cust_id": "customer_id",
    "cust_name": "full_name",
    "cust_email": "email"
  }
}
```

Input record from source:

```json
{
  "cust_id": 12345,
  "cust_name": "John Smith",
  "cust_email": "john@example.com"
}
```

Step-by-step execution:

```
Read mapping rule: "cust_id" → "customer_id"
  → Copy value 12345 to new key "customer_id"
Read mapping rule: "cust_name" → "full_name"
  → Copy value "John Smith" to new key "full_name"
Read mapping rule: "cust_email" → "email"
  → Copy value "john@example.com" to new key "email"
```

Output record:

```json
{
  "customer_id": 12345,
  "full_name": "John Smith",
  "email": "john@example.com"
}
```

The code does not know or care about field names. It reads the mapping from configuration and applies it. When marketing renames the source field from “cust_name” to “customer_full_name”, you change one line in the JSON file. The framework processes the new mapping on the next run without any code change.
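The mapping engine itself can be very small. A minimal Python sketch, assuming mappings are a flat `{source_field: destination_field}` dictionary as in the configuration above; `apply_mappings` and `run_mapping` are hypothetical names, and raising `KeyError` on a missing source field is an assumed policy, not the only reasonable one.

```python
def apply_mappings(record, mappings):
    """Return a new record with keys renamed per {source_field: destination_field}.

    Only mapped fields are copied, as in the step-by-step trace. A source
    field missing from the record raises KeyError (an assumed policy) so
    that schema drift surfaces immediately instead of producing silent gaps.
    """
    return {destination: record[source] for source, destination in mappings.items()}


def run_mapping(records, mappings):
    """Apply the same mapping configuration to every record in a batch."""
    return [apply_mappings(record, mappings) for record in records]
```

After the marketing rename, only the mapping dictionary changes (`"customer_full_name": "full_name"` replaces `"cust_name": "full_name"`); neither function is touched.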

Advanced Mappings: Beyond Simple Renames

Simple field renames cover most cases, but production pipelines need more. Configuration can express complex transformations: concatenating fields, conditional logic, lookups against reference tables, and computed values.

| Mapping Type | Use Case | Configuration Example | Result |
|---|---|---|---|
| Simple rename | Source and destination use different names | "cust_name" → "customer_name" | Copies value, changes key |
| Concatenation | Combine multiple fields into one | "${street}, ${city}, ${state}" | Merged address string |
| Conditional | Map values based on conditions | when active=1 then "active" | Transformed status value |
| Lookup | Replace codes with descriptions | countries.where(code="US").name | “United States” |
| Computed | Calculate a value from other fields | "${quantity} * ${unit_price}" | Calculated total |
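Lookup and simple conditional mappings can stay declarative when they are written as value tables rather than expressions. The Python sketch below assumes a hypothetical rule shape (`source`, `target`, `table`, optional `default`); it does not implement the `countries.where(...)` or `when active=1` syntax from the table, only the underlying idea of mapping one value to another through data.

```python
# Hypothetical declarative rules, as they might appear in a JSON config.
EXAMPLE_RULES = [
    {"source": "country_code", "target": "country",
     "table": {"US": "United States", "CA": "Canada"}},
    {"source": "active", "target": "status",
     "table": {"1": "active", "0": "inactive"}, "default": "unknown"},
]


def apply_value_rules(record, rules):
    """Apply lookup/conditional rules to one record and return a new record.

    Each rule maps the value of `source` through `table` (a plain dict) and
    writes the result to `target`. Values are compared as strings because
    JSON object keys are always strings; unmatched values get `default`
    (None if the rule does not define one).
    """
    out = dict(record)
    for rule in rules:
        raw = record.get(rule["source"])
        out[rule["target"]] = rule["table"].get(str(raw), rule.get("default"))
    return out
```

Changing “US” to display differently, or adding a new status code, is then an edit to the table in the config file rather than to `apply_value_rules`.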

Let us trace a concatenation mapping:

Configuration:

```json
{
  "target": "full_address",
  "expression": "${street}, ${city}, ${state} ${zip}"
}
```

```
Input: { "street": "123 Main St", "city": "Austin", "state": "TX", "zip": "78701" }

Step 1: Find ${street} → "123 Main St"
Step 2: Find ${city}   → "Austin"
Step 3: Find ${state}  → "TX"
Step 4: Find ${zip}    → "78701"
Step 5: Substitute all placeholders

Output: { "full_address": "123 Main St, Austin, TX 78701" }
```

Each of these transformations is a data change, not a code change. Marketing wants “United States” instead of “US”? Update the lookup table. The address format needs to include the country? Add ${country} to the expression. No deployment needed.
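Python's standard `string.Template` uses the same `${name}` placeholder syntax, so the concatenation trace above can be sketched directly with it. `apply_expression` is a hypothetical helper name.

```python
from string import Template


def apply_expression(record, target, expression):
    """Fill ${field} placeholders from the record and store the result under target.

    Template.substitute() raises KeyError if the record lacks a referenced
    field, which surfaces a misspelled placeholder instead of hiding it.
    """
    return {target: Template(expression).substitute(record)}


address = apply_expression(
    {"street": "123 Main St", "city": "Austin", "state": "TX", "zip": "78701"},
    "full_address",
    "${street}, ${city}, ${state} ${zip}",
)
```

This covers concatenation only. A computed mapping such as `${quantity} * ${unit_price}` would come out of a template as literal text like `3 * 9.99`; evaluating it needs an expression evaluator, which is where configuration starts drifting toward code.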

Mental Model: The Recipe Book

Think of the difference between a chef and a cookbook. The chef knows HOW to cook: chopping, sauteing, baking, plating. These skills are the same regardless of what dish is being made. The cookbook knows WHAT to cook: ingredients, proportions, temperatures, timing. Different pages make different dishes.

| Kitchen Concept | ETL Equivalent | Changes How Often |
|---|---|---|
| The chef’s skills | Framework code (mapping engine, cleaning engine, loading engine) | Rarely. Core skills are stable. |
| The recipe | Pipeline configuration (field mappings, cleaners, connections) | Frequently. New dishes every week. |
| The kitchen | Infrastructure (servers, databases, network) | Occasionally. Renovations happen. |
| The ingredients | Source data (records from external systems) | Constantly. New data every run. |

When you want a new dish, you do not retrain the chef. You give them a new recipe. When you want a new field mapping, you do not rewrite the pipeline code. You update the configuration. The chef’s skills are stable and well-tested. The recipes change frequently based on what is being served today.

This analogy also explains why configuration validation matters. A recipe that says “bake at 10,000 degrees” is clearly wrong. The chef catches it before turning on the oven. Your configuration validator catches “batch_size: -1” before the pipeline starts processing data.

How to Validate Configuration Before Processing Data

Configuration validation is the most underrated part of configuration-driven ETL. Every production failure I have debugged that traced back to bad configuration could have been caught with proper validation. The principle is simple: validate everything before processing anything.

| Validation Type | What It Checks | Example Failure |
|---|---|---|
| Structural | Required fields are present, types are correct | Missing “name” field, batch_size is a string instead of an integer |
| Referential | Referenced objects exist | Connection “legacy_crm” is not defined in connections config |
| Logical | Values make sense together | batch_size is 0, retry_count is negative, timeout exceeds 24 hours |
| Environmental | Required environment variables are set | ${DB_PASS} is referenced but not set in the environment |
| Connectivity | Referenced systems are reachable | Database host resolves but connection is refused |

```
Validating pipeline: customer-sync

Structural validation:
  ✓ "name" field present: "customer-sync"
  ✓ "extractor" section present with required fields
  ✓ "loader" section present with required fields
  ✓ "batch_size" is a positive integer: 1000

Referential validation:
  ✓ Connection "legacy_crm" exists in connections config
  ✓ Connection "main_db" exists in connections config
  ✓ Cleaner "email_cleaner" is a registered cleaner class
  ✓ Cleaner "phone_cleaner" is a registered cleaner class

Environmental validation:
  ✓ ${LEGACY_CRM_HOST} is set: "db.internal.company.com"
  ✓ ${LEGACY_CRM_USER} is set: "etl_reader"
  ✓ ${LEGACY_CRM_PASS} is set: (masked)

Connectivity validation:
  ✓ legacy_crm: Connection successful (47ms)
  ✓ main_db: Connection successful (12ms)

Result: All 13 checks passed. Pipeline is ready to run.
```

Run validation at two points: when the configuration is first loaded (catches structural and referential errors) and immediately before execution (catches environmental and connectivity issues). The first catches bad config files. The second catches infrastructure problems that appeared since the config was last validated.
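The log above reports checks category by category. Below is a Python sketch of the Logical and Environmental rows of the validation table that collects every problem instead of stopping at the first one. The fallback values (1000, 3, 300) come from the framework-defaults table; treating `timeout` as seconds and the helper names are assumptions. Connectivity checks are omitted because they need live systems.

```python
import os
import re

PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")
MAX_TIMEOUT_SECONDS = 24 * 60 * 60  # assumes timeout is expressed in seconds


def find_placeholders(value):
    """Yield every ${NAME} referenced anywhere in a nested config."""
    if isinstance(value, dict):
        for item in value.values():
            yield from find_placeholders(item)
    elif isinstance(value, list):
        for item in value:
            yield from find_placeholders(item)
    elif isinstance(value, str):
        yield from PLACEHOLDER.findall(value)


def preflight_errors(config, env=None):
    """Return a list of logical and environmental problems (empty if none)."""
    env = os.environ if env is None else env
    errors = []

    # Logical checks: values must make sense, not just have the right type.
    batch_size = config.get("batch_size", 1000)
    if isinstance(batch_size, bool) or not isinstance(batch_size, int) or batch_size <= 0:
        errors.append(f"batch_size must be a positive integer, got {batch_size!r}")
    retry_count = config.get("retry_count", 3)
    if not isinstance(retry_count, int) or retry_count < 0:
        errors.append(f"retry_count must not be negative, got {retry_count!r}")
    timeout = config.get("timeout", 300)
    if not isinstance(timeout, (int, float)) or not 0 < timeout <= MAX_TIMEOUT_SECONDS:
        errors.append(f"timeout must be positive and at most 24 hours, got {timeout!r}")

    # Environmental checks: every referenced variable must be set.
    for name in sorted(set(find_placeholders(config))):
        if name not in env:
            errors.append(f"${{{name}}} is referenced but not set in the environment")
    return errors
```

Collecting all problems means one validation run tells the person editing the config everything that needs fixing, rather than one error per attempt.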

Who Benefits From Configuration-Driven ETL

The separation of code and configuration unlocks collaboration across roles. People who understand the data can modify pipeline behavior without understanding the code that executes it.

| Role | What They Can Do | Without Needing To |
|---|---|---|
| Data analysts | Adjust field mappings, add cleaning rules, modify validation thresholds | Write or understand framework code |
| Operations | See exactly what a pipeline does by reading configuration | Understand code to understand behavior |
| Developers | Focus on framework capabilities, not specific field mappings | Know every pipeline’s business logic |
| QA / Testing | Review config diffs to understand what changed | Read code diffs with complex logic changes |
| Management | Audit pipeline behavior through readable config files | Trust verbal descriptions of what pipelines do |

The biggest benefit is speed. When a source system changes a field name, the fix is a one-line config change that can be reviewed and deployed in minutes. Compare that to a code change, which needs a developer, a code review, testing, and a trip through the deployment pipeline.

Common Anti-Patterns in Configuration Design

Configuration-driven ETL introduces its own class of problems. These anti-patterns undermine the benefits of separating code from configuration.

| Anti-Pattern | Why It Seems Reasonable | Why It Fails | What to Do Instead |
|---|---|---|---|
| Putting logic in config | “We can express everything in JSON” | Configuration becomes a programming language without tooling, debugging, or type safety | Keep config declarative. If it needs if/else logic, it belongs in code. |
| No config validation | “The pipeline will catch errors at runtime” | You discover a typo in a field name after processing 100K records | Validate all config before processing any data |
| Credentials in config files | “It is easier to have everything in one place” | Passwords committed to Git history permanently | Use environment variables for all secrets |
| One giant config file | “Easier to see everything together” | Merge conflicts, hard to review changes, impossible to find what changed | One config file per pipeline, shared connections config |
| No defaults | “Every pipeline should explicitly declare everything” | Config files are 200 lines long when 10 lines differ from defaults | Sensible defaults that pipelines can override |
| Unversioned config | “It is just a config file, not real code” | No history, no rollback, no audit trail | Commit config files to version control alongside code |

The most common anti-pattern I have seen is putting logic in configuration. It starts innocently: a conditional mapping, then a loop, then error handling. Before long, the JSON file is a poorly designed programming language. Configuration should be declarative, not procedural. It should describe WHAT you want, not HOW to achieve it.

A good test: if a non-developer cannot read and understand the configuration file, it has too much logic in it. Configuration should read like a specification, not like code.

Configuration Versioning and Change Management

Configuration files should be treated with the same discipline as code. They should be version-controlled, reviewed, and tested. The advantage is that configuration diffs are typically much simpler to review than code diffs.

```diff
commit abc123: "Update customer-sync mapping for new source field"

- "cust_name": "customer_name",
+ "customer_full_name": "customer_name",
```

This diff tells the entire story:

- The source field changed from "cust_name" to "customer_full_name"
- The destination field "customer_name" stays the same
- No code was changed
- No risk to framework behavior

Compare that to a code change for the same modification, which might touch multiple files, include test updates, and require understanding the framework internals to review.
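A reviewer usually wants to know which destination field is now fed by which source field. Below is a small Python sketch, using a hypothetical helper `describe_mapping_change`, that turns two versions of a `{source: destination}` mapping into that summary. It assumes each destination field has exactly one source.

```python
def describe_mapping_change(old, new):
    """Summarize how a {source: destination} mapping changed between versions.

    Inverts both mappings to {destination: source} (assumes one source per
    destination) and reports every destination whose source changed.
    """
    old_by_dest = {dest: src for src, dest in old.items()}
    new_by_dest = {dest: src for src, dest in new.items()}
    notes = []
    for dest in sorted(old_by_dest.keys() | new_by_dest.keys()):
        before, after = old_by_dest.get(dest), new_by_dest.get(dest)
        if before == after:
            continue  # unchanged mapping, nothing to review
        if before is None:
            notes.append(f"{dest}: new mapping from {after}")
        elif after is None:
            notes.append(f"{dest}: mapping from {before} removed")
        else:
            notes.append(f"{dest}: source changed from {before} to {after}")
    return notes


notes = describe_mapping_change(
    {"cust_name": "customer_name", "cust_email": "email"},
    {"customer_full_name": "customer_name", "cust_email": "email"},
)
```

Output like this could be posted on the pull request alongside the raw diff, giving reviewers the same story in plain words.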

| Practice | What It Provides | How to Implement |
|---|---|---|
| Version control | History of every change, who made it, when | Commit config files to Git alongside code |
| Change review | Another pair of eyes on config changes | Pull request for config changes, same as code |
| Automated testing | Validation before deployment | CI pipeline runs config validation on every commit |
| Rollback procedure | Quick recovery from bad changes | Revert the config commit, next run uses previous version |
| Change log | Business context for technical changes | Commit messages explain WHY the mapping changed |
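For the “Automated testing” row, a CI step only needs a script that exits non-zero when any config file is invalid. A minimal Python sketch, assuming pipeline configs live as `*.json` files in a `pipelines/` directory and that `name`, `extractor`, and `loader` are the required top-level keys; in practice this structural check would be replaced by a JSON Schema or the framework's own validator.

```python
import json
import sys
from pathlib import Path

REQUIRED_KEYS = ("name", "extractor", "loader")  # assumed minimal structure


def check_config_text(label, text):
    """Return a list of problems found in one pipeline config's JSON text."""
    try:
        config = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"{label}: invalid JSON ({exc.msg} at line {exc.lineno})"]
    if not isinstance(config, dict):
        return [f"{label}: top level must be a JSON object"]
    return [f"{label}: missing required key '{key}'"
            for key in REQUIRED_KEYS if key not in config]


def main(config_dir="pipelines"):
    """Validate every *.json file in config_dir and return a process exit code."""
    problems = []
    for path in sorted(Path(config_dir).glob("*.json")):
        problems.extend(check_config_text(str(path), path.read_text(encoding="utf-8")))
    for problem in problems:
        print(problem, file=sys.stderr)
    return 1 if problems else 0
```

A CI job could call `sys.exit(main())` from a small wrapper script, so a broken config file fails the build before it is merged.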

One practice that has saved my team multiple times: record a “reason” or “changed_by” note with every configuration change, either in the commit message or as fields in the config file itself (JSON has no comment syntax). When you are debugging a pipeline failure at 3 AM, knowing that “marketing renamed the field on January 15” is far more useful than a bare config diff.

Key Takeaways

Configuration-driven ETL is about making the right things easy to change and the wrong things hard to break. When done well, it transforms how your team works with data pipelines.

- Separate what from how: Configuration declares what the pipeline does. Code implements how it does it. Keep them apart.

- Layer your configuration: Framework defaults, environment variables, pipeline config, runtime overrides. Later layers win.

- Never commit credentials: Use environment variables for all secrets. Config files should be safe to commit to Git.

- Validate before processing: Catch configuration errors before touching any data. Check structure, references, logical consistency, environment variables, and connectivity.

- Keep configuration declarative: If it needs if/else logic, it belongs in code. Configuration should read like a specification.

- Version control everything: Config files, connection definitions, mapping rules. Treat them with the same discipline as code.

- Design for multiple roles: Analysts should be able to modify mappings. Operations should be able to read behavior. Developers should focus on capabilities.

- Use sensible defaults: Most pipelines should need only a few lines of configuration. Defaults handle the rest.

The goal is a system where changing pipeline behavior is a data change, not a code change. When someone on your team can update a field mapping, add a cleaning rule, or adjust a batch size without involving a developer, you have achieved the configuration-driven ideal.

For more on the principles behind configuration management, the Config factor of the Twelve-Factor App methodology provides the foundational thinking. For schema validation of configuration files, JSON Schema is a widely used standard for checking that configuration structure is correct before runtime.