- What is the 80/20 ETL Framework Architecture?

- What Gets Reused Across Every Pipeline

- What is Actually Unique to Each Pipeline

- How Component Resolution Works

- Extending Without Modifying the Framework

- The Battle-Tested Component Library

- Mental Model: The Power Tool Workshop

- Why This Architecture Scales

- Common Anti-Patterns to Avoid

- Getting Started: Your First Framework Pipeline

- Key Takeaways

You start a new ETL project. You write code to stream data from a file. You write code to batch records for efficient database inserts. You write code to dispatch events for logging. You write code to handle errors gracefully. Two weeks later, you realize you have written this exact code before — on the last three projects. This is the problem that ETL framework architecture solves.

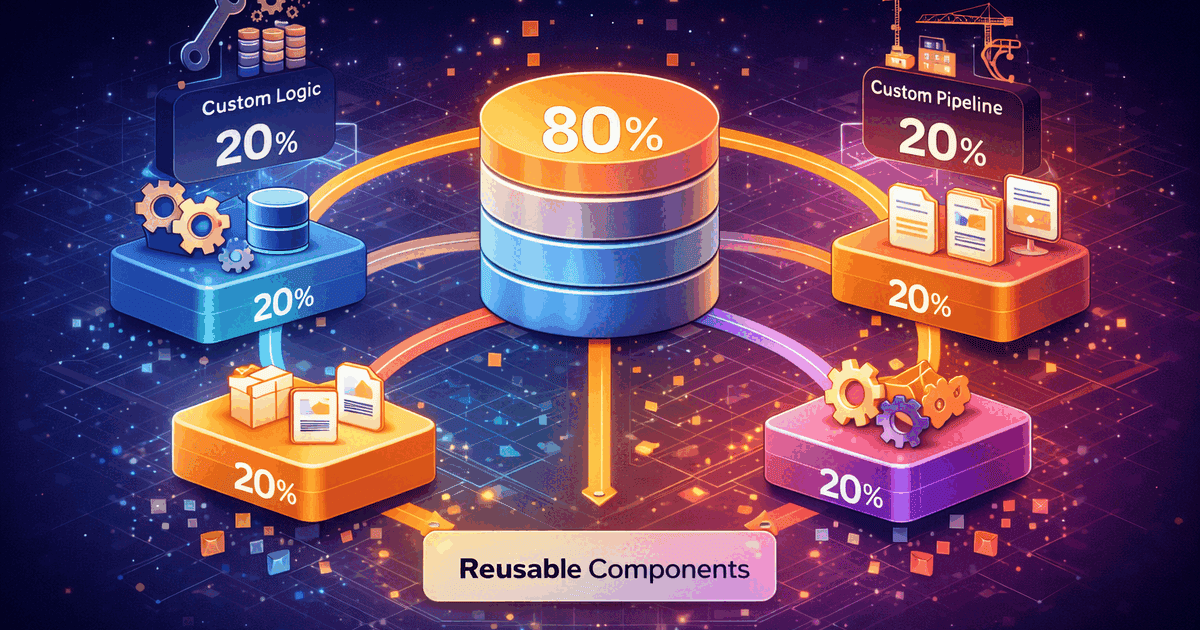

About 80% of every pipeline is infrastructure: streaming, batching, error handling, event dispatching, configuration management. Only 20% is unique: your specific field mappings, business rules, and domain logic. The 80/20 ETL framework architecture captures that common infrastructure so you write less code and reuse more. Instead of rebuilding the plumbing for every project, you build it once, test it thoroughly, and focus your time on the business logic that actually matters.

This is the capstone of the ETL Pipeline Series. It brings together everything: the 6-Phase Pipeline Pattern, production-tested cleaners, event-driven observability, configuration-driven design, and dependency management. Each of these patterns is a building block. The framework is the structure that holds them together.

What is the 80/20 ETL Framework Architecture?

The 80/20 principle applied to ETL means that most of the code you write for any pipeline is the same code you wrote for the last pipeline. The framework captures this repeated code — the infrastructure, the patterns, the edge case handling — and makes it reusable. You stop solving solved problems and focus on the 20% that makes each pipeline unique.

| Category | Percentage | What It Includes | Changes How Often |
|---|---|---|---|
| Framework (infrastructure) | 80% | Streaming engine, batch processor, event dispatcher, config parser, error handler, transaction manager, checkpoint system | Rarely. Stable after initial development. |
| Business Logic (your code) | 20% | Field mappings, validation rules, cleaning customizations, domain-specific transformations, business rules | Frequently. Changes with every new source or requirement. |

This is not a theoretical split. I have tracked it across multiple projects. The streaming code is identical. The batch insert code is identical. The event dispatching code is identical. The configuration loading code is identical. What changes is which fields map to which, what the validation rules are, and what domain-specific logic applies. That is the 20%.

What Gets Reused Across Every Pipeline: The 80%

Let us trace what code is identical in every ETL project. This is the infrastructure that the framework provides.

| Component | What It Does | Why It Is Always The Same | Series Reference |
|---|---|---|---|
| Data Streamer | Reads data one record at a time, constant memory | The mechanics of streaming do not change regardless of data source | Memory-Efficient Processing |
| Batch Processor | Groups records for efficient database inserts | Batch insert logic is the same for every table | Multi-Table ETL |
| Event Dispatcher | Broadcasts events to listeners with priority ordering | The observer pattern is universal across all pipelines | Event-Driven Observability |
| Config Loader | Reads, merges, and validates pipeline configuration | Loading JSON and substituting variables is always the same | Configuration-Driven ETL |
| Error Handler | Catches, logs, and recovers from processing errors | Try/catch patterns and retry logic are universal | 6-Phase Pattern |
| Checkpoint Manager | Records progress for recovery after failures | Checkpoint read/write logic does not change between pipelines | Multi-Table ETL |
| Data Cleaners | Phone, date, email, address, business ID cleaning | The same edge cases appear in every project | Data Cleaners |

All of this is framework code. It does not change between projects. It should be written once, tested thoroughly with edge cases from real production data, and reused forever. Every hour spent rewriting this code is an hour wasted.
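To make the streaming and batching rows concrete, here is a minimal sketch using PHP generators. The function names `streamCsv` and `batch` are hypothetical and are not the framework's actual API; the sketch also assumes every CSV row has the same number of columns as the header.

```php
<?php
declare(strict_types=1);

/**
 * Yield CSV rows one at a time as associative arrays keyed by the header row.
 * Only the current row is held in memory.
 */
function streamCsv(string $path): Generator
{
    $handle = fopen($path, 'r');
    if ($handle === false) {
        throw new RuntimeException("Cannot open {$path}");
    }
    try {
        $header = fgetcsv($handle, 0, ',', '"', '\\');
        if ($header === false) {
            return; // empty file: nothing to stream
        }
        while (($row = fgetcsv($handle, 0, ',', '"', '\\')) !== false) {
            if ($row === [null]) {
                continue; // fgetcsv reports a blank line as [null]
            }
            yield array_combine($header, $row);
        }
    } finally {
        fclose($handle);
    }
}

/**
 * Group any iterable of records into arrays of at most $size records.
 */
function batch(iterable $records, int $size): Generator
{
    $buffer = [];
    foreach ($records as $record) {
        $buffer[] = $record;
        if (count($buffer) === $size) {
            yield $buffer;
            $buffer = [];
        }
    }
    if ($buffer !== []) {
        yield $buffer; // flush the final partial batch
    }
}

// Usage with a throwaway file; the data is made up for illustration.
$path = tempnam(sys_get_temp_dir(), 'etl');
file_put_contents($path, "id,name\n1,Ada\n2,Grace\n3,Linus\n");
$batches = iterator_to_array(batch(streamCsv($path), 2), false);
unlink($path);
```

Because `batch` accepts any iterable, the same function works whether records come from a file, a database cursor, or an in-memory array, which is why this kind of code does not need to change between projects.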

What is Actually Unique to Each Pipeline: The 20%

Now let us look at what actually changes between projects. This is the 20% where your time creates real value.

| Unique Element | Example: CRM Sync | Example: ERP Import | Example: CSV Load |
|---|---|---|---|
| Field mappings | cust_id → customer_id | customer_number → customer_id | “Customer ID” → customer_id |
| Source connection | PostgreSQL on crm.internal | Oracle on erp.company.com | SFTP file drop at /data/daily/ |
| Business rules | Require email OR phone | Require valid account number | Require positive quantities |
| Domain validation | MC number format | Account number checksum | SKU pattern match |
| Cleaning overrides | Strip “EXT:” from phone | Convert Oracle dates | Handle Excel date serials |

This is the code that actually differs between projects. The field names change. The connection details change. The business rules change. But the infrastructure — how you stream records, how you batch inserts, how you dispatch events — stays the same. The framework handles the infrastructure. You handle the business logic.
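As a rough illustration of how small the per-project part can be, the sketch below applies the three field mappings from the table with one shared function. `applyMappings` is a hypothetical name, and letting unmapped fields pass through unchanged is an assumption of this sketch, not documented framework behavior.

```php
<?php
declare(strict_types=1);

/**
 * Rename fields according to a source => target mapping.
 * Assumption: fields without a mapping pass through unchanged.
 */
function applyMappings(array $record, array $mappings): array
{
    $mapped = [];
    foreach ($record as $field => $value) {
        $mapped[$mappings[$field] ?? $field] = $value;
    }
    return $mapped;
}

// The mappings come from the table above; the record values are made up.
$crm = applyMappings(
    ['cust_id' => 42, 'email' => 'ada@example.com'],
    ['cust_id' => 'customer_id']
);
$erp = applyMappings(
    ['customer_number' => 42, 'email' => 'ada@example.com'],
    ['customer_number' => 'customer_id']
);
$csv = applyMappings(
    ['Customer ID' => 42, 'email' => 'ada@example.com'],
    ['Customer ID' => 'customer_id']
);
```

All three sources end up with the same record shape. Only the mapping array, which is data rather than code, differs between them.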

How Component Resolution Works in the Framework

The framework uses namespace-priority loading. When a configuration file references a component like “MappingTransformer”, the resolver looks for your custom version first. If it does not exist, it falls back to the framework default. This means you get framework behavior for free and override only what you need.

```text
Configuration requests "MappingTransformer"

Scenario 1: No custom version exists
  Step 1: Look for App\ETL\MappingTransformer → Not found
  Step 2: Look for Framework\Infrastructure\MappingTransformer → Found
  Result: Use framework's MappingTransformer ✓

Scenario 2: You created a custom version
  Step 1: Look for App\ETL\MappingTransformer → Found
  Result: Use YOUR MappingTransformer ✓
  (Framework version is ignored)

Scenario 3: You extend the framework version
  Step 1: Look for App\ETL\MappingTransformer → Found
  Result: Use YOUR version, which internally calls parent framework methods
  You get: Framework behavior + your customizations
```

The configuration does not change between scenarios. It still says “MappingTransformer.” The resolver finds the right implementation automatically based on namespace priority. This is the Open-Closed Principle in action: open for extension, closed for modification.
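The lookup order described above can be sketched in a few lines of PHP. This is a minimal illustration under stated assumptions, not the framework's real resolver: the `ComponentResolver` class, the `describe()` method, and the empty `BatchProcessor` are hypothetical, and the classes share one file only to keep the example self-contained (in a real project each class would live in its own autoloaded file).

```php
<?php
declare(strict_types=1);

namespace Framework\Infrastructure;

class MappingTransformer
{
    public function describe(): string
    {
        return 'framework default';
    }
}

class BatchProcessor
{
}

/** Hypothetical resolver: tries each namespace in priority order. */
final class ComponentResolver
{
    /** @var string[] Namespaces to search, highest priority first. */
    private array $namespaces;

    public function __construct(array $namespaces)
    {
        $this->namespaces = $namespaces;
    }

    /** Return the fully qualified name of the first matching class. */
    public function resolve(string $shortName): string
    {
        foreach ($this->namespaces as $namespace) {
            $candidate = $namespace . '\\' . $shortName;
            if (class_exists($candidate)) {
                return $candidate;
            }
        }
        throw new \RuntimeException("No implementation found for {$shortName}");
    }
}

namespace App\ETL;

// Scenario 3: a custom version that extends the framework version.
class MappingTransformer extends \Framework\Infrastructure\MappingTransformer
{
    public function describe(): string
    {
        return 'custom on top of ' . parent::describe();
    }
}

$resolver = new \Framework\Infrastructure\ComponentResolver(
    ['App\\ETL', 'Framework\\Infrastructure']
);
$custom = $resolver->resolve('MappingTransformer'); // custom version wins
$fallback = $resolver->resolve('BatchProcessor');   // only the framework has one
$description = (new $custom())->describe();
```

The caller only ever passes the short name. Deleting the `App\ETL` class would make the same call return the framework default, with no change to the configuration.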

Extending Without Modifying the Framework

The most powerful aspect of this ETL framework architecture is extension without modification. You never edit framework code. You extend it. The framework’s battle-tested logic stays intact while you add your domain-specific behavior on top.

Framework cleaner (500+ lines of production-tested code):

```php
class ContactDataCleaner {
    public function cleanPhoneNumber($phone) {
        // Handles 47 different phone formats
        // International support (7-15 digits)
        // Edge cases from millions of records
        // (implementation omitted in this excerpt)
    }
}
```

Your extension (10 lines):

```php
class IndustryContactCleaner extends ContactDataCleaner {
    public function cleanPhoneNumber($phone) {
        // Remove industry-specific prefixes such as "EXT:", "FAX:", "TEL:"
        $phone = preg_replace('/^\s*(?:EXT|FAX|TEL):\s*/i', '', (string) $phone);

        // Delegate to framework for everything else
        return parent::cleanPhoneNumber($phone);
    }
}
```

Result:

- You get: 500+ lines of battle-tested cleaning logic for free
- You add: 10 lines of industry-specific preprocessing
- Framework code: untouched, still works for every other project

This pattern works across every framework component. Need custom validation? Extend the validator. Need a different batch strategy? Extend the batch processor. Need industry-specific date handling? Extend the date cleaner. You always start with working, tested code and add only what is specific to your domain.

The Battle-Tested Component Library

The framework includes components extracted from years of production ETL work. These are not theoretical implementations. They handle edge cases that only appear after processing millions of records.

| Component | What It Handles | Edge Cases Covered |
|---|---|---|
| ContactDataCleaner | Phone, fax, email cleaning | 47 phone formats, “—” as phone, international 7-15 digits |
| AddressCleaner | Address sanitization and normalization | XSS prevention, PO Box detection, “NULL”/“N/A” removal |
| DateCleaner | Date parsing and normalization | MySQL 0000-00-00, multi-format fallback, timezone handling |
| BusinessDataCleaner | Industry identifiers | MC numbers, DOT numbers, EINs, SCAC codes |
| DataStream | Memory-efficient iteration | Files larger than memory, CSV/JSON/database sources |
| BatchProcessor | Efficient database loading | Configurable batch sizes, upsert support, transaction management |
| EventDispatcher | Observability infrastructure | Priority ordering, error isolation, propagation control |
| ConfigLoader | Layered configuration management | Environment variable substitution, validation, merging |

These edge cases are not hypothetical. The literal string “NULL”, the 0000-00-00 date, the phone number that is just dashes: all of these come from real production data. When you use the framework, you get years of edge case handling without spending the years.
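A minimal sketch of how a cleaner might treat the placeholder values named above. The function name `normalizePlaceholder` and the exact placeholder list are assumptions for illustration; the framework's real cleaners are described as covering far more cases.

```php
<?php
declare(strict_types=1);

/**
 * Collapse common "no value" placeholders to null and trim everything else.
 */
function normalizePlaceholder(?string $value): ?string
{
    if ($value === null) {
        return null;
    }
    $trimmed = trim($value);

    // Literal strings that stand in for "no value" (illustrative list).
    $placeholders = ['', 'NULL', 'N/A', '0000-00-00'];
    if (in_array(strtoupper($trimmed), $placeholders, true)) {
        return null;
    }

    // A value made only of hyphens, en dashes, or em dashes.
    if (preg_match('/^[-\x{2013}\x{2014}]+$/u', $trimmed) === 1) {
        return null;
    }

    return $trimmed;
}

$cleaned = array_map('normalizePlaceholder', ['NULL', ' n/a ', '0000-00-00', '---', ' 555-0100 ']);
```

Only the last value survives, trimmed to `555-0100`. The others become `null`, so the loader can store a real NULL instead of the text "NULL".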

Mental Model: The Power Tool Workshop

Think of the difference between building furniture from raw lumber versus using a workshop full of power tools.

| Approach | What You Do | Time Per Project | ETL Equivalent |
|---|---|---|---|
| Raw lumber | Cut by hand, sand manually, carve joints | Weeks per piece | Write streaming, batching, events from scratch every project |
| Power tools | Table saw cuts, sander smooths, router makes joints | Days per piece | Use framework for infrastructure, write only business logic |
| What you focus on | Design, dimensions, finish (not how to build a saw) | Creative work | Field mappings, business rules (not how to batch insert) |

The framework is your power tool workshop. The tools are reliable, tested, and ready to use. You focus on what makes your project unique — the design, the business rules, the domain logic — not on rebuilding infrastructure that already exists.

And just like real power tools, the framework tools get better over time. Every edge case discovered in one project improves the tool for all future projects. A phone number format that broke one pipeline gets added to the cleaner, and every future pipeline handles it automatically.

Why This Architecture Scales Across Teams and Projects

The ETL framework architecture scales in three dimensions: across projects, across team members, and across time.

| Dimension | Without Framework | With Framework |
|---|---|---|
| New project | 2-3 weeks to build infrastructure before business logic starts | Hours to configure, then straight to business logic |
| New team member | Must understand the entire pipeline codebase | Must understand configuration + their 20% of business logic |
| Bug in infrastructure | Fixed in one project, still broken in others | Fixed once in framework, all projects benefit |
| New edge case | Each project discovers and handles it independently | Handled in framework, applied everywhere |
| Code review | Reviewing infrastructure + business logic together | Reviewing only the 20% business logic |

The compounding effect is significant. Project 1 builds the framework. Project 2 extends it. By Project 5, you have a battle-tested framework with edge case handling from five different data sources, five different domains, and five different failure modes. Each project makes the framework stronger for all future projects.

Common Anti-Patterns to Avoid

Framework architecture can go wrong. These anti-patterns undermine the benefits and create more problems than they solve.

| Anti-Pattern | Why It Seems Reasonable | Why It Fails | What to Do Instead |
|---|---|---|---|
| Premature abstraction | “Let us build the framework before writing any pipelines” | You abstract the wrong things without real-world usage to guide you | Extract the framework from working pipelines, not the other way around |
| God framework | “The framework should handle everything” | Overly complex, hard to extend, fights you instead of helping | Keep framework focused on the 80%. Let business logic stay in application code. |
| Modifying framework code | “It is faster to just change this one line in the framework” | Now your framework diverges from the canonical version, losing future updates | Always extend, never modify. Use namespace priority for overrides. |
| Config as code | “We can express everything in YAML” | Configuration becomes a programming language without proper tooling | Keep config declarative. Complex logic belongs in extendable code, not config. |
| Skipping tests | “The framework is stable, no need to test our extensions” | Your extensions might interact with framework code in unexpected ways | Test your business logic. The framework tests its own code. |

The most dangerous anti-pattern is premature abstraction. Building a framework before you have written at least two or three real pipelines means you are guessing at what should be abstracted. The result is a framework that abstracts the wrong things and forces awkward workarounds for the things that actually matter. Always extract a framework from working code, never design one in the abstract.

Getting Started: Your First Framework Pipeline

Building your first pipeline with the framework follows a simple pattern: configure, customize, run.

Step 1: Create configuration (the "what")

pipelines/customer-sync.json:

```json
{
  "name": "customer-sync",
  "extractor": { "class": "DatabaseExtractor", "connection": "legacy_crm" },
  "mappings": { "cust_id": "customer_id", "cust_name": "full_name" },
  "cleaners": { "phone": "phone_cleaner", "email": "email_cleaner" },
  "loader": { "class": "DatabaseLoader", "connection": "main_db", "table": "customers" }
}
```

Step 2: Add business rules (the "unique 20%")

```php
class CustomerValidator extends FrameworkValidator {
    public function validate($record) {
        if (empty($record['email']) && empty($record['phone'])) {
            // reject() and accept() are assumed to be inherited from FrameworkValidator
            return $this->reject("Customer must have email or phone");
        }
        return $this->accept();
    }
}
```

Step 3: Run

```
framework run customer-sync
```

The framework handles:

✓ Reading configuration and validating it

✓ Connecting to source and destination databases

✓ Streaming records one at a time (memory efficient)

✓ Applying field mappings from config

✓ Running your custom validator

✓ Cleaning phone and email with framework cleaners

✓ Batch inserting into destination (1,000 at a time)

✓ Dispatching events for logging and monitoring

✓ Recording checkpoints for recovery

✓ Handling errors without crashing the entire pipeline

You wrote a JSON config file and a 10-line validator. The framework handled everything else. That is the 80/20 split in practice: a few minutes of configuration and business logic, supported by thousands of lines of tested infrastructure.
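To show how the framework can call your validator without knowing about it in advance, here is a heavily simplified sketch of a run loop. Everything in it is an assumption made for illustration: the `RecordValidator` interface, the `runPipeline` function, and the loader callback are hypothetical stand-ins, and cleaning, events, checkpoints, and error recovery are left out.

```php
<?php
declare(strict_types=1);

interface RecordValidator
{
    /** Return null to accept the record, or a rejection reason. */
    public function validate(array $record): ?string;
}

// The "unique 20%": the same rule as the validator in Step 2.
final class EmailOrPhoneValidator implements RecordValidator
{
    public function validate(array $record): ?string
    {
        return (empty($record['email']) && empty($record['phone']))
            ? 'Customer must have email or phone'
            : null;
    }
}

/**
 * Hypothetical run loop: rename fields, validate, load in batches.
 * The loop depends only on the interface, never on a concrete validator.
 */
function runPipeline(
    iterable $source,
    array $mappings,
    RecordValidator $validator,
    callable $load,
    int $batchSize = 1000
): array {
    $stats = ['loaded' => 0, 'rejected' => 0];
    $batch = [];
    foreach ($source as $record) {
        $mapped = [];
        foreach ($record as $field => $value) {
            $mapped[$mappings[$field] ?? $field] = $value; // unmapped fields pass through
        }
        if ($validator->validate($mapped) !== null) {
            $stats['rejected']++;
            continue; // one bad record does not stop the run
        }
        $batch[] = $mapped;
        if (count($batch) === $batchSize) {
            $load($batch);
            $stats['loaded'] += count($batch);
            $batch = [];
        }
    }
    if ($batch !== []) {
        $load($batch); // flush the final partial batch
        $stats['loaded'] += count($batch);
    }
    return $stats;
}

// Made-up records; the loader just collects batches in memory.
$source = [
    ['cust_id' => 1, 'cust_name' => 'Ada', 'email' => 'ada@example.com', 'phone' => ''],
    ['cust_id' => 2, 'cust_name' => 'Bob', 'email' => '', 'phone' => ''],
    ['cust_id' => 3, 'cust_name' => 'Cy', 'email' => '', 'phone' => '555-0100'],
];
$loaded = [];
$stats = runPipeline(
    $source,
    ['cust_id' => 'customer_id', 'cust_name' => 'full_name'],
    new EmailOrPhoneValidator(),
    function (array $batch) use (&$loaded): void {
        $loaded[] = $batch;
    },
    2
);
```

The second record is rejected and the other two are loaded as a single batch. Swapping in a different validator changes the business rule without touching the loop.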

Key Takeaways

The ETL framework architecture is about focusing your time where it creates the most value. Infrastructure is a solved problem. Business logic is where your expertise matters.

- 80% is infrastructure: Streaming, batching, events, config, error handling. Write it once, reuse forever.

- 20% is business logic: Field mappings, validation rules, domain-specific cleaning. This is where your time creates value.

- Extract, do not design: Build the framework from working pipelines, not from abstract architecture diagrams.

- Extend, never modify: Use namespace priority to override framework behavior without changing framework code.

- The framework gets stronger over time: Every edge case from every project improves it for all future projects.

- Configuration drives behavior: What the pipeline does lives in config. How it does it lives in the framework.

- New projects take hours, not weeks: Configure, add business rules, run. Infrastructure is already solved.

- New team members contribute faster: They only need to understand the 20% business logic, not the 80% infrastructure.

This is not about being lazy. It is about not solving solved problems. Your competitive advantage is in understanding your data, your domain, and your business rules. Not in yet another implementation of batch processing or event dispatching. Build on what works. Extend only what you need. Let the framework handle the rest.

For more on framework design principles, the Open-Closed Principle explains the architectural foundation that makes extensible frameworks possible. For practical patterns, Inversion of Control is the mechanism that allows framework code to call your business logic without knowing about it in advance.