Every ETL pipeline addresses the same fundamental challenge: data exists in one system, needs to exist in another system, and something must change along the way. That sounds simple. It is not. Behind that sentence sits decades of engineering complexity, failed 3 AM runs, and hard-won lessons about what actually works in production.

This is not a tutorial. This is how I think about ETL pipeline design after building data integration systems for years. The philosophy first. Then the mechanics. Then the patterns that emerge when you combine both. Every principle here comes from real production experience, and every recommendation links to a deeper exploration in this series.

What is an ETL Pipeline? Understanding the Core Problem

An ETL pipeline is a data integration process that extracts data from source systems, transforms it according to specific rules, and loads it into a destination system. The term stands for Extract, Transform, Load. But that clean three-word definition hides the real complexity underneath.

The core tension in data engineering stems from a reality that never changes: different systems optimize for different objectives. They are built for different jobs, and they cannot serve each other directly.

| System Type | Optimized For | Example |

|---|---|---|

| Source Databases | Write performance, transaction processing | PostgreSQL, MySQL |

| Analytics Warehouses | Read performance, query speed | BigQuery, Snowflake |

| APIs | Low latency, real-time responses | REST, GraphQL endpoints |

| Data Lakes | Storage capacity, schema flexibility | S3, Azure Data Lake |

Your source database is built to handle thousands of writes per second. Your analytics warehouse is built to scan millions of rows in a single query. These are fundamentally different machines with fundamentally different priorities. An ETL pipeline is the bridge between them. It translates between worlds that speak different languages and care about different things.

In my experience, the engineers who build reliable ETL pipelines are the ones who understand this tension deeply. They do not just move data. They understand why the systems are incompatible, and they design the translation layer with that understanding.

Why the Traditional Extract-Transform-Load Model Falls Short

Extract-Transform-Load sounds clean. Three phases. Three responsibilities. In practice, “Transform” becomes a dumping ground for everything that happens between extraction and loading. Type conversions live there. Business rules live there. Data cleaning lives there. Enrichment lives there. When your ETL pipeline breaks at 3 AM, you are digging through a monolith trying to figure out which of these completely different operations failed.

The insight that changed how I design pipelines: transformation is not one thing. It is several distinct operations that happen to occur between extraction and loading. Separating them is not about code organization. It is about understanding what actually happens to your data and being able to debug it when things go wrong.

| Phase | What It Does | How It Can Fail |

|---|---|---|

| Map | Changes structure without changing meaning. Field A becomes Field B. Nested becomes flat. | Schema mismatches, missing fields |

| Type Convert | Changes representation. String becomes integer. Timestamp becomes date. | Invalid formats, precision loss |

| Clean | Improves quality. Invalid values become null. Malformed data becomes well-formed. | Excessive cleaning means upstream problems |

| Enrich | Adds information. Lookups. Calculations. Derived fields. | Missing reference data, calculation errors |

Each of these can fail independently. Each has different failure modes. Each requires different debugging approaches. When they are bundled together under “Transform,” every problem is harder to diagnose.

When each phase is separate, you know exactly where a failure occurred. If the phone number is wrong, it is a cleaning problem. If the date format fails, it is a type conversion problem. No guessing.

I explore this pattern in full detail, including implementation code, in The 6-Phase Pipeline Pattern.

How Streaming Architecture Solves the Memory Problem

Most ETL pipeline code follows a pattern that works until it does not: load all data into memory, process it, write it out. The question is not whether your dataset will exceed available memory. The question is when.

Here is what happens under the hood with the naive approach:

data = extract_all_records() # 10M records loaded → Memory: 4GB

transformed = transform_all(data) # New copy created → Memory: 8GB

load_all(transformed) # Finally writes out → Memory crashes before thisThe solution is streaming: never hold the entire dataset. Process one record at a time. Chain operations together. Memory usage stays constant regardless of dataset size.

for record in extract_stream(): # One record at a time

mapped = map_record(record) # Transform in place

converted = convert_types(mapped)

cleaned = clean_record(converted)

enriched = enrich_record(cleaned)

load_record(enriched) # Write immediately

# Previous record released from memoryThis is not optimization. This is fundamental architecture. The difference between an ETL pipeline that handles 10,000 records and one that handles 10 million is not speed. It is whether the pipeline completes at all.

Think of it like a factory assembly line. Workers do not pile up all the parts on the floor, process them, then move everything at once. One part enters, gets processed at each station, and exits. The factory floor stays clear regardless of how many parts flow through.

I cover the implementation details, including iterator patterns and generator-based streaming, in Memory-Efficient Data Processing.

What Makes an ETL Pipeline Observable?

An ETL pipeline that runs silently is a pipeline you cannot trust. But the answer is not more logging. The answer is observability, and the two are not the same thing.

| Approach | What It Tells You | When It Helps |

|---|---|---|

| Logging | What happened after the fact | Post-mortem debugging |

| Observability | What is happening right now, and what normally happens | Detecting anomalies before they become failures |

Here is the scenario that made this click for me. Your pipeline processes 100,000 customer records every night. One morning it processes 50,000 records and reports “SUCCESS.” Logging says: task completed successfully. Observability says: volume anomaly detected, investigate source system.

The pattern I use in every production pipeline: emit events at every significant point. Pipeline started. Phase completed. Record failed validation. Batch processed. Let monitoring systems decide what matters. The ETL pipeline itself should not know what you care about tracking. It should report everything and let downstream systems filter.

Every production pipeline should track at minimum: records extracted, records transformed, records failed, records loaded, duration per phase, and quality metrics like validation failure rates. When these numbers change unexpectedly, something has changed upstream.

For the full implementation pattern including event-driven architecture, see Event-Driven Observability.

How to Design ETL Pipelines That Recover Gracefully

When your ETL pipeline fails halfway through processing 10 million records, what happens? This is not a theoretical question. It happens. The answer determines whether you lose hours or minutes.

| Recovery Strategy | How It Works | Pros | Cons |

|---|---|---|---|

| Start Over | Delete partial results, run from beginning | Simple, safe | Wasteful, time-consuming |

| Resume | Continue from the failure point | Efficient | Complex, requires state tracking |

| Idempotent | Rerun safely, get identical results | Simple AND efficient | Requires careful design upfront |

Idempotency means running an operation multiple times produces the same result as running it once. If your pipeline is idempotent, recovery is trivial: just run it again. No cleanup. No state tracking. No partial result corruption.

In practice, this means using upserts instead of inserts, so re-running updates existing records rather than creating duplicates. It means delete-then-insert for batch loads, so a rerun replaces the same date range cleanly. It means storing progress state in the destination database, not in the pipeline process, so the state survives restarts.

-- Non-idempotent: Running twice creates duplicates

INSERT INTO customers (id, name) VALUES (1, 'John');

-- Idempotent: Running twice produces same result

INSERT INTO customers (id, name) VALUES (1, 'John')

ON CONFLICT (id) DO UPDATE SET name = EXCLUDED.name;I have learned this the hard way. The teams that sleep well are the ones whose pipelines can be restarted at any point without anyone worrying about data corruption.

Why Configuration-Driven Design Changes Everything

Code tells you how. Configuration tells you what. When these two are mixed together, every change requires a developer. When they are separated, domain experts can modify pipeline behavior without touching implementation code.

The principle: anything that might change between environments or over time should be configuration. Connection strings. Field mappings. Validation rules. Batch sizes. The code that processes configuration should be stable. The configuration itself evolves.

source:

type: postgres

table: customers

mappings:

- source: customer_id

destination: id

type: integer

- source: full_name

destination: name

cleaner: trim_whitespace

- source: email_address

destination: email

validators:

- email_format

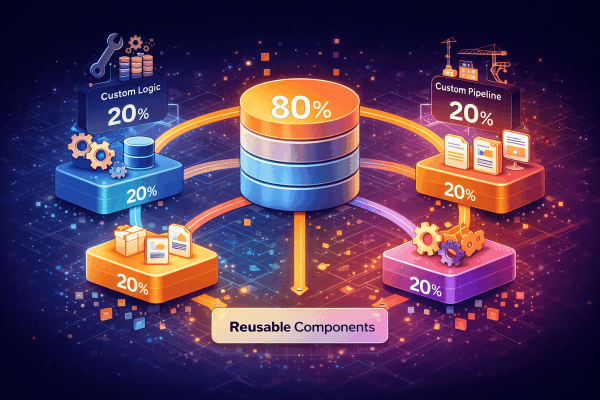

- not_nullThis connects directly to what I call the 80/20 insight. Every ETL pipeline project reinvents the same infrastructure: memory-efficient streaming, batch processing, transaction handling, event dispatching, error recovery. These are solved problems. Solving them again adds zero business value.

| Category | Percentage | What It Includes |

|---|---|---|

| Framework (stable) | 80% | Streaming engine, transaction management, error recovery, monitoring, configuration parsing |

| Business Logic (changes) | 20% | Your field mappings, your validation rules, your enrichment logic, your data quality standards |

Focus your time on the 20% that makes your pipeline unique. Let a framework handle the infrastructure. This is not about being lazy. It is about directing effort where it creates value.

I detail the configuration patterns in Configuration-Driven ETL and the framework architecture in The 80/20 Framework Architecture.

The Data Quality Reality Nobody Talks About

Source data is never clean. This is not a bug. It is a universal constant. I have never encountered a source system that sends perfectly formatted data. Systems store “NULL” as a literal string. Dates arrive as “0000-00-00”. Phone numbers are just dashes. Company names say “UNKNOWN” or “N/A”.

The question is not whether to clean data. The question is how explicitly you do it.

| Approach | What Happens | Why It Matters |

|---|---|---|

| Implicit Cleaning | Silently fixes bad data, no record of what changed | Problems are hidden. You cannot detect upstream changes. |

| Explicit Cleaning | Reports every change, tracks what was cleaned and why | Problems are visible. Sudden spikes tell you something changed upstream. |

Build cleaners that report what they changed. Track how much data fails each rule. Over time, this data tells you about your source systems. If phone number cleaning suddenly doubles from 5% to 10%, that is not a cleaning problem. That is a signal that something changed upstream, and you need to investigate.

For production-tested cleaning patterns with actual implementation code, see Production-Tested Data Cleaners.

How Table Dependencies Determine ETL Pipeline Success

Tables have relationships. Foreign keys enforce referential integrity. Loading order matters. If you load orders before customers, the database rejects everything because the foreign key references do not exist yet.

The solution is topological sorting: declare dependencies, let the system figure out the correct order automatically. When circular dependencies exist, the system should fail loudly. Circular dependencies are a design problem, not a loading problem.

For the full implementation including topological sort algorithms, see Multi-Table ETL Pipelines.

The Transaction Boundary Decision

Where you commit transactions in your ETL pipeline is a critical architectural decision. There is no universally correct answer. The choice depends on your specific consistency requirements, performance constraints, and failure tolerance.

| Strategy | Scope | Consistency | Performance | Best For |

|---|---|---|---|---|

| Entire Pipeline | All-or-nothing | Perfect | Slowest | Small datasets, critical accuracy |

| Per Batch | Every N records | Good | Balanced | Most production systems |

| Per Record | Each record | Immediate | Slowest | Real-time, low-latency needs |

Most production systems I have worked on use per-batch commits, typically 1,000 to 10,000 records, with checkpoint tracking. Good enough consistency. Good enough performance. Reasonable recovery. The “good enough” approach wins in production more often than the theoretically perfect approach.

Key Takeaways: The Philosophy of Boring Pipelines

ETL pipeline work is not glamorous. It is plumbing. But plumbing done wrong floods the building. Plumbing done right is invisible. The best ETL pipelines are boring.

- Separate concerns: Break “Transform” into distinct phases (map, convert, clean, enrich) so you can debug each independently

- Stream, do not batch: Process one record at a time for constant memory usage regardless of dataset size

- Design for observability: Emit comprehensive metrics and events so anomalies surface before they become outages

- Build idempotent operations: Design pipelines that produce identical results whether run once or ten times

- Configure, do not code: Separate business logic from infrastructure so domain experts can modify behavior

- Track data quality explicitly: Report every cleaning operation so you can detect upstream changes

- Respect dependencies: Declare table relationships and let topological sorting determine load order

- Focus on the 20%: Use frameworks for infrastructure, invest your time in domain-specific logic

Boring means reliable. Reliable means the pipeline runs at 3 AM, processes millions of records, fails gracefully when sources change, recovers without intervention, and produces the same results every time. Boring means you can forget it exists and focus on problems that actually need your attention.

I am building a framework that embeds these principles. It handles the 80% so you can focus on the 20% that makes your ETL pipeline unique. Because ETL infrastructure should be a solved problem, not a project.