- What Is AI Context Engineering?

- Why Stateless AI Is Costing You More Than You Think

- The Three Layers of Context That Actually Matter

- Under the Hood: How Context Injection Works

- Context Engineering vs Prompt Engineering

- Real-World Context Patterns That Work in Production

- Context Engineering Anti-Patterns to Avoid

- The Real Cost of AI Forgetting Everything

- How to Build a Context Management Layer

- Key Takeaways

AI context engineering is the discipline that will separate useful AI systems from frustrating ones. Every conversation you have with an AI assistant starts from zero. It does not remember your project. It does not know your coding style. It has no idea you explained your architecture three times this week already. This is not a minor inconvenience. It is a fundamental limitation that costs real money, wastes real time, and degrades the quality of every interaction.

I have spent the past two years building AI-powered development tools, and the single biggest insight I have gained is this: the quality of an AI interaction is determined less by the model and more by the context it receives. A smaller model with great context will outperform a larger model with no context, almost every time.

What Is AI Context Engineering?

AI context engineering is the practice of systematically managing, storing, retrieving, and injecting relevant information into AI interactions. It goes far beyond writing good prompts. It is about building infrastructure that ensures the AI has the right information at the right time, without the user having to provide it manually.

Context engineering sounds like another buzzword, but it really comes down to this: making sure the AI knows what it needs to know before you ask it anything. Think of it as briefing a new team member before a meeting, except you have to do it every single time because the team member has amnesia.

The difference between a helpful AI assistant and a frustrating one almost always comes down to context. Not model size. Not temperature settings. Not prompt tricks. Context.

| Component | What It Does | Example |
|---|---|---|
| Context Store | Persists facts, preferences, and decisions across sessions | CLAUDE.md files, vector databases, memory stores |
| Relevance Engine | Determines which stored context matters for the current query | Semantic search, keyword matching, recency scoring |
| Injection Layer | Adds relevant context to prompts automatically | System prompts, RAG pipelines, context windows |
| Feedback Loop | Updates stored context based on new interactions | Preference learning, correction tracking |

Why Stateless AI Is Costing You More Than You Think

When you work with a human colleague, they remember your projects, your coding style, and your preferences. They know that when you say “the usual approach,” you mean a specific pattern your team has established. Language models have none of this, so every session you re-explain the same things. That repetition is the core problem AI context engineering aims to solve.

I tracked this in my own workflow for a month. The results were sobering.

| Activity | Time Per Session | Sessions Per Week | Weekly Waste |
|---|---|---|---|
| Re-explaining project architecture | 3-5 minutes | 15+ | 45-75 minutes |
| Re-stating coding conventions | 1-2 minutes | 15+ | 15-30 minutes |
| Re-providing business context | 2-3 minutes | 10+ | 20-30 minutes |
| Correcting assumptions from lack of context | 3-5 minutes | 10+ | 30-50 minutes |
| Total weekly waste | | | 110-185 minutes |

That is roughly two to three hours per week spent on context that the AI should already have. Over a 52-week year, that adds up to roughly 95 to 160 hours. But time is only part of the cost.

Token economics are real. Every token you send is billed, and so is every token the AI sends back. When you re-explain context that the AI should already know, you are paying for repetition. A typical context re-explanation uses 500-1,000 tokens. At current API prices, that is small per session but significant at scale: teams running hundreds of AI interactions daily can spend thousands of dollars monthly on redundant context. AI context engineering eliminates this waste by persisting and retrieving relevant information automatically.
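The arithmetic behind that claim is easy to sketch. The function below is a back-of-the-envelope estimator, not a billing model; every parameter, including the per-million-token price, is a hypothetical input you would replace with your own provider's rates and usage. It assumes the re-explained context stays in the conversation and is billed again as input on each turn, since chat-style APIs typically receive the full history with every request.

```python
def redundant_context_cost(
    interactions_per_day: int,
    tokens_per_reexplanation: int,
    turns_per_interaction: int,
    usd_per_million_input_tokens: float,
    days: int = 30,
) -> float:
    """Rough monthly spend on context the model should already have.

    Assumes the re-explained context is resent as input on every turn of an
    interaction, which is how stateless chat APIs receive conversation history.
    Ignores output tokens and any prompt-caching discounts.
    """
    tokens = (
        interactions_per_day
        * tokens_per_reexplanation
        * turns_per_interaction
        * days
    )
    return tokens / 1_000_000 * usd_per_million_input_tokens


if __name__ == "__main__":
    # Hypothetical inputs: 300 interactions/day, 1,000 tokens of re-explanation,
    # 10 turns each, $3 per million input tokens.
    print(redundant_context_cost(300, 1_000, 10, 3.0))  # 270.0
```

Because the result scales linearly with every input, longer multi-turn sessions and pricier models move the total far more than the size of any single re-explanation does.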

Conversation quality degrades. The AI cannot build on previous insights if it does not remember them. You are always starting from zero. The suggestions it gives are generic because it lacks the specific understanding that comes from knowing your system.

The Three Layers of Context That Actually Matter

Through building several AI-powered development tools, including Mini-CoderBrain and AgentMind (a private multi-agent research project), I have identified three distinct layers of context that determine how useful an AI interaction will be.

| Layer | What It Contains | Lifespan | Example |
|---|---|---|---|
| Session Context | Current conversation, immediate task, recent messages | Minutes to hours | “We are refactoring the auth module right now” |
| Project Context | Architecture, codebase structure, tech stack, decisions made | Weeks to months | “This project uses PostgreSQL with row-level security” |
| Personal Context | Preferences, patterns, historical interactions, style | Months to years | “I prefer explicit error handling over try-catch blocks” |

Session context is what most AI tools handle reasonably well. The conversation history stays in the context window. You ask a question, the AI remembers what you discussed two messages ago. This is the baseline, but effective AI context engineering goes much deeper.

Project context is where things get interesting. This is the knowledge that your codebase uses a specific framework, that you made a deliberate architectural decision to separate reads from writes, that the deployment pipeline requires certain conventions. Mechanisms like CLAUDE.md files, Cursor rules, and other project-level configuration files are early attempts at solving this layer. They work surprisingly well for their simplicity.

Personal context is the hardest layer and the one almost no tool handles well. This includes your coding style preferences, your experience level with specific technologies, the patterns you have learned to avoid from past failures. When an AI knows that you spent three days debugging a race condition last month, it can proactively warn you about similar patterns.

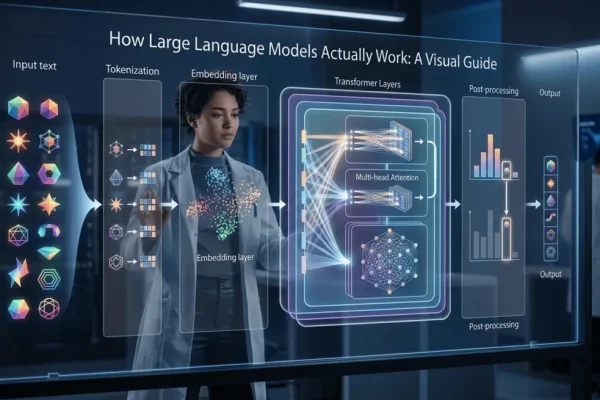

Under the Hood: How Context Injection Actually Works

Let me walk through what happens when a well-designed context system processes your request. This is a simplified model of the general approach that tools like Claude Code, Cursor, and similar assistants take; their exact internals differ from tool to tool.

```text
Step 1: You type "refactor the auth middleware"
  → System captures your raw input

Step 2: Relevance engine activates
  → Searches project context for "auth" and "middleware"
  → Finds: auth module architecture, recent changes, coding conventions
  → Scores each piece by relevance (0.0 to 1.0)

Step 3: Context assembly
  → System prompt: 200 tokens (role, tone, rules)
  → Project context: 1,500 tokens (auth module details, patterns used)
  → Personal context: 300 tokens (your style preferences)
  → Session history: 800 tokens (recent conversation)
  → Your query: 50 tokens
  → Total injected: ~2,850 tokens before the model sees anything

Step 4: Model receives the assembled prompt
  → It "sees" a rich, contextual request
  → Response quality is dramatically higher than raw query alone

Step 5: Feedback captured
  → If you accept the suggestion → relevance scores reinforced
  → If you reject or modify → context updated for next time
```

The key insight here is that the user typed 4 words, but the model received 2,850 tokens of context. That gap between what you type and what the model sees is where context engineering lives. The better that gap is filled, the better the AI performs.
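Steps 2 and 5 can be sketched in a few lines. This is a minimal illustration, assuming simple keyword overlap as the relevance signal (real tools more likely use semantic search) and multiplicative weight updates as the feedback rule. The `ContextItem` type, the 0.2 cutoff, and the 1.1/0.8 reinforcement factors are hypothetical choices, not taken from any specific tool.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    text: str
    keywords: set[str]
    weight: float = 1.0  # adjusted over time by user feedback


def tokenize(query: str) -> set[str]:
    return {word.strip(".,!?\"'").lower() for word in query.split()}


def score(item: ContextItem, query: str) -> float:
    """Relevance in [0.0, 1.0]: share of the item's keywords found in the query."""
    if not item.keywords:
        return 0.0
    overlap = len(tokenize(query) & item.keywords) / len(item.keywords)
    return min(1.0, overlap * item.weight)


def rank(
    items: list[ContextItem], query: str, min_score: float = 0.2
) -> list[tuple[float, ContextItem]]:
    """Step 2: score every stored item and keep only those above the cutoff."""
    scored = [(score(item, query), item) for item in items]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(s, item) for s, item in scored if s >= min_score]


def record_feedback(used: list[ContextItem], accepted: bool) -> None:
    """Step 5: reinforce context behind accepted answers, demote it otherwise."""
    factor = 1.1 if accepted else 0.8
    for item in used:
        item.weight = max(0.1, min(2.0, item.weight * factor))


if __name__ == "__main__":
    items = [
        ContextItem("Auth middleware validates JWTs before routing.", {"auth", "middleware", "jwt"}),
        ContextItem("Billing uses Stripe webhooks.", {"billing", "stripe"}),
    ]
    query = "refactor the auth middleware"
    ranked = rank(items, query)
    print([item.text for _, item in ranked])  # only the auth item passes the cutoff
    record_feedback([item for _, item in ranked], accepted=True)
    print(round(score(items[0], query), 3))  # 0.733 after reinforcement
```

Clamping the weight between 0.1 and 2.0 keeps a single bad session from permanently burying a useful fact, and keeps a popular one from crowding out everything else.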

How Does Context Engineering Differ from Prompt Engineering?

People often confuse context engineering with prompt engineering. The two are related, but they solve different problems and differ fundamentally in scope and approach.

| Dimension | Prompt Engineering | Context Engineering |
|---|---|---|
| Focus | How you phrase the question | What information surrounds the question |
| Scope | Single interaction | Across sessions and projects |
| Persistence | Ephemeral (per message) | Persistent (stored and retrieved) |
| Automation | Manual (user writes prompts) | Automatic (system injects context) |
| Scaling | Does not scale (every prompt is manual) | Scales with better retrieval systems |
| Example | “Act as a senior engineer and review this code” | System auto-injects project conventions, recent changes, and known bugs |

Prompt engineering is a skill. Context engineering is infrastructure. You need both, but AI context engineering has a much higher ceiling because it compounds over time. A good context system gets better the more you use it.

What Real-World Context Patterns Work in Production?

After experimenting with several approaches, here are the AI context engineering patterns I have seen work in real systems.

Pattern 1: Project configuration files. This is the simplest and most effective pattern. Files like CLAUDE.md, .cursorrules, or .github/copilot-instructions.md that sit in your repository and get automatically loaded into the AI context. They contain your project conventions, architecture decisions, and coding standards. I use this pattern for every project now, and the improvement in AI output quality is immediate and significant.
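A loader for this pattern can be very small. The sketch below assumes one simple convention: find the repository root (the nearest directory containing `.git`) and concatenate whichever of the three files named above exist there. Real tools have their own discovery rules (nested files, user-level files, precedence), so treat `load_project_context` and its search order as hypothetical.

```python
from pathlib import Path

# File names come from the article; the lookup rule below is an assumption.
CONTEXT_FILES = ("CLAUDE.md", ".cursorrules", ".github/copilot-instructions.md")


def find_repo_root(start: Path) -> Path:
    """Return the nearest ancestor (or start itself) that contains a .git entry."""
    start = start.resolve()
    for candidate in (start, *start.parents):
        if (candidate / ".git").exists():
            return candidate
    return start  # not inside a repository: fall back to the starting directory


def load_project_context(start: Path) -> str:
    """Concatenate every known context file found at the repository root."""
    root = find_repo_root(start)
    sections = []
    for name in CONTEXT_FILES:
        path = root / name
        if path.is_file():
            body = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {name}\n{body}")
    return "\n\n".join(sections)


if __name__ == "__main__":
    print(load_project_context(Path.cwd()) or "No project context files found.")
```

In a context layer, the returned string would typically sit near the top of the system prompt so that every request sees the same project conventions.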

Pattern 2: Retrieval-Augmented Generation (RAG). Store your knowledge base in a vector database. When a query comes in, retrieve the most relevant chunks using semantic search. Inject only those chunks into the prompt. This pattern powers most enterprise AI applications. The model is just the final step. The retrieval system does the heavy lifting.
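The retrieve-then-inject flow can be sketched without any external services. To keep it self-contained, this example swaps in bag-of-words vectors and cosine similarity for a learned embedding model, and an in-memory list for the vector database; `embed`, `retrieve`, and `build_prompt` are illustrative names, not a library API.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Bag-of-words term counts: a stand-in for a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query (the vector-search step)."""
    q = embed(query)
    return sorted(chunks, key=lambda chunk: cosine(q, embed(chunk)), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Inject only the retrieved chunks, not the whole knowledge base."""
    context = "\n".join(f"- {chunk}" for chunk in retrieve(query, chunks, k))
    return f"Relevant context:\n{context}\n\nQuestion: {query}"
```

Swapping `embed` for a real embedding model and the list for a vector store changes the quality of the ranking, not the shape of the pipeline.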

Pattern 3: Hierarchical memory stores. Build a layered memory system with different persistence levels. Hot memory for the current session. Warm memory for the current project. Cold memory for long-term preferences and patterns. Each layer has different retrieval costs and relevance decay rates.
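One way to model the three tiers is to give each its own half-life, so a memory's weight decays exponentially with age at a rate set by its tier. The half-lives below (one hour, 30 days, one year) and the `HierarchicalMemory` class are assumptions for illustration; the pattern itself only says the layers decay at different rates.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Tier:
    name: str
    half_life_s: float  # assumed: seconds for a memory's weight to halve
    items: list[tuple[str, float]] = field(default_factory=list)  # (text, created_at)


class HierarchicalMemory:
    def __init__(self) -> None:
        day = 86_400
        self.tiers = {
            "hot": Tier("hot", half_life_s=3_600),        # current session
            "warm": Tier("warm", half_life_s=30 * day),   # current project
            "cold": Tier("cold", half_life_s=365 * day),  # long-term preferences
        }

    def remember(self, tier: str, text: str, now: Optional[float] = None) -> None:
        created = time.time() if now is None else now
        self.tiers[tier].items.append((text, created))

    def recall(
        self, now: Optional[float] = None, min_weight: float = 0.25
    ) -> list[tuple[str, str, float]]:
        """Return (tier, text, weight) for memories above min_weight, strongest first."""
        now = time.time() if now is None else now
        results = []
        for tier in self.tiers.values():
            for text, created in tier.items:
                weight = 0.5 ** ((now - created) / tier.half_life_s)
                if weight >= min_weight:
                    results.append((tier.name, text, weight))
        return sorted(results, key=lambda r: r[2], reverse=True)
```

With these settings a session note fades below the cutoff after two hours, while a stored preference stays near full weight for months. Retrieval-cost differences between tiers (in-process lists versus database lookups) are left out of this sketch.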

Pattern 4: Conversation summarization. Instead of keeping full conversation history (which consumes tokens fast), periodically summarize completed topics into compact context blocks. A 20-message conversation about database design becomes a 200-token summary that captures the key decisions. This preserves the insights while freeing up context window space.
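The compaction step can be sketched as a function that leaves history alone while it fits a token budget and otherwise folds older messages into a single summary message. The `summarize` callable is a hypothetical stand-in for an LLM call, and the four-characters-per-token estimate is a common rough heuristic for English text rather than a real tokenizer.

```python
from typing import Callable

Message = dict[str, str]  # {"role": ..., "content": ...}


def approx_tokens(text: str) -> int:
    # Rough heuristic (~4 characters per token for English); not a real tokenizer.
    return max(1, len(text) // 4)


def compact_history(
    history: list[Message],
    summarize: Callable[[list[Message]], str],
    budget_tokens: int,
    keep_recent: int = 4,
) -> list[Message]:
    """Fold older messages into one summary once the history exceeds its budget."""
    total = sum(approx_tokens(m["content"]) for m in history)
    if total <= budget_tokens or len(history) <= keep_recent:
        return history  # still fits, or nothing old enough to summarize
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    summary = {"role": "system", "content": f"Summary of earlier discussion: {summarize(older)}"}
    return [summary] + recent
```

Keeping the last few messages verbatim preserves the immediate thread of the conversation, while the summary carries the decisions made earlier.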

| Pattern | Complexity | Effectiveness | Best For |
|---|---|---|---|
| Project config files | Low | High | Individual developers, small teams |
| RAG with vector search | Medium | High | Knowledge bases, documentation, enterprise |
| Hierarchical memory | High | Very high | AI agents, long-running assistants |
| Conversation summarization | Low | Medium | Chat applications, support bots |

What Are the Most Common Context Engineering Anti-Patterns?

I have made most of these mistakes myself. Learning what not to do in AI context engineering was as valuable as learning what works.

| Anti-Pattern | What Happens | Better Approach |
|---|---|---|
| Context dumping | Injecting everything you know into every prompt, overwhelming the model | Use relevance scoring to inject only what matters for this specific query |
| Stale context | Using outdated project information that contradicts current state | Version your context and include timestamps; invalidate on change |
| Context duplication | Same information appears multiple times in the prompt, wasting tokens | Deduplicate before injection; use references instead of repetition |
| Ignoring token budgets | Context exceeds window limits, causing truncation of important information | Set hard token budgets per context layer; prioritize by relevance |
| No feedback loop | Context quality never improves because the system does not learn from corrections | Track which context led to accepted vs rejected suggestions |
| Manual context only | Relying on users to provide context every time, which they forget or tire of | Automate context retrieval and injection; make it invisible |
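Two of the fixes in the table, invalidating stale context and deduplicating before injection, can be combined into one filter. This sketch assumes each stored fact records the file it was derived from and a SHA-256 hash of that file at the time. An entry is dropped when the file has since changed or disappeared, and repeated facts are collapsed after normalizing case and whitespace. `ContextEntry` and `fresh_unique` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass


def content_hash(content: str) -> str:
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ContextEntry:
    text: str         # the stored fact, e.g. "Auth uses JWT sessions."
    source: str       # file the fact was derived from (assumed bookkeeping)
    source_hash: str  # content_hash of that file when the fact was recorded


def fresh_unique(
    entries: list[ContextEntry], current_sources: dict[str, str]
) -> list[ContextEntry]:
    """Drop stale entries (source changed or deleted) and duplicate facts."""
    seen: set[str] = set()
    kept: list[ContextEntry] = []
    for entry in entries:
        current = current_sources.get(entry.source)
        if current is None or content_hash(current) != entry.source_hash:
            continue  # stale: invalidate instead of injecting outdated context
        key = " ".join(entry.text.lower().split())  # normalize case and whitespace
        if key in seen:
            continue  # duplicate: already queued for injection
        seen.add(key)
        kept.append(entry)
    return kept
```

Hash-based invalidation is deliberately coarse: any edit to a source file retires every fact derived from it, which errs on the side of re-learning rather than injecting something outdated.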

The most common mistake I see is context dumping. Teams build a RAG system, retrieve twenty documents, and inject all of them into the prompt. The model gets confused because it cannot distinguish between highly relevant and marginally relevant information. This is a fundamental lesson in AI context engineering: less context, better selected, almost always beats more context poorly selected.

What Is the Real Cost of AI Forgetting Everything?

Let me put real numbers to this problem. These are based on my own usage patterns and current API pricing, so your numbers will vary. But the proportions hold, and they make the case for AI context engineering clear.

| Cost Category | Without Context Engineering | With Context Engineering | Savings |
|---|---|---|---|
| Tokens per session (context setup) | 800-1,500 tokens | 50-100 tokens | 90-95% |
| Time per session (re-explaining) | 5-10 minutes | 0-1 minutes | 80-100% |
| Correction iterations per task | 3-5 rounds | 1-2 rounds | 50-70% |
| Monthly API cost (heavy user) | $80-150 | $40-70 | 40-55% |
| Output quality (subjective) | Generic, often misaligned | Specific, contextually appropriate | Significant |

The biggest cost is not money. It is quality. Without proper context, the AI gives you technically correct but contextually wrong answers. It suggests patterns your team has decided against. It uses conventions that do not match your codebase. Every correction cycle wastes time and erodes trust in the tool.

How to Build a Context Management Layer

In my work on Mini-CoderBrain, I have been building a context management layer that sits between the user and the LLM. The AI context engineering architecture is simpler than you might expect.

```text
┌─────────────────────────────────────────┐
│ User Input                              │
│ "refactor auth module"                  │
└──────────────┬──────────────────────────┘
               │
               ▼
┌─────────────────────────────────────────┐
│ Relevance Engine                        │
│ 1. Parse intent (refactor, auth)        │
│ 2. Search project context → auth files  │
│ 3. Search personal context → style prefs│
│ 4. Score and rank by relevance          │
│ 5. Apply token budget (max 3000 tokens) │
└──────────────┬──────────────────────────┘
               │
               ▼
┌─────────────────────────────────────────┐
│ Context Assembly                        │
│ System prompt    [200 tokens]           │
│ Project context  [1,500 tokens]         │
│ Personal prefs   [300 tokens]           │
│ Session history  [800 tokens]           │
│ User query       [50 tokens]            │
│ ───────────────────────────────         │
│ Total            [2,850 tokens]         │
└──────────────┬──────────────────────────┘
               │
               ▼
┌─────────────────────────────────────────┐
│ LLM API Call                            │
│ Model receives rich, contextual prompt  │
│ Response quality: HIGH                  │
└─────────────────────────────────────────┘
```

The key design principle is this: the best context system is one you never have to think about. It should be invisible. The user types a short query, and the system automatically assembles the right context behind the scenes. If the user has to manually manage context, your AI context engineering has failed.
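The Context Assembly stage can be sketched as a greedy packer: walk the layers in priority order, add each layer's pre-ranked snippets until either that layer's budget or the overall 3,000-token cap from the diagram would be exceeded, then append the query. Token counts use a rough four-characters-per-token heuristic, which is an assumption; a production layer would use the target model's tokenizer. `Layer` and `assemble` are hypothetical names.

```python
from dataclasses import dataclass


def approx_tokens(text: str) -> int:
    # Rough heuristic (~4 characters per token); swap in a real tokenizer in practice.
    return max(1, len(text) // 4)


@dataclass
class Layer:
    name: str
    budget: int          # hard per-layer token cap
    snippets: list[str]  # pre-ranked by the relevance engine, best first


def assemble(layers: list[Layer], query: str, max_total: int = 3000) -> tuple[str, int]:
    """Pack layers in priority order without exceeding per-layer or total budgets."""
    parts: list[str] = []
    used = approx_tokens(query)  # reserve room for the query itself
    for layer in layers:
        layer_used = 0
        for snippet in layer.snippets:
            cost = approx_tokens(snippet)
            if layer_used + cost > layer.budget or used + cost > max_total:
                break  # snippets are ranked, so everything after this one matters less
            parts.append(snippet)
            layer_used += cost
            used += cost
    parts.append(query)
    return "\n\n".join(parts), used
```

Per-layer caps stop one verbose layer (typically project context) from starving the others, and the global cap is the last line of defense against truncation by the model's context window.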

Start simple. A CLAUDE.md file in your project root is a context system. It is not sophisticated, but it works. From there, you can add retrieval, memory persistence, and feedback loops as your needs grow. Do not over-engineer the first version of your AI context engineering system. The simplest context system that improves your workflow is better than the perfect system you never build.

Key Takeaways

- Context determines AI quality more than model choice: A smaller model with great context will outperform a larger model with no context. Invest in AI context engineering infrastructure before upgrading models.

- There are three layers of context: Session context (what is happening now), project context (the codebase and decisions), and personal context (your preferences and patterns). Most tools only handle the first layer.

- AI context engineering is infrastructure, not a one-time effort: Unlike prompt engineering, context engineering compounds over time. The system gets better the more you use it.

- Start with project configuration files: CLAUDE.md, .cursorrules, and similar files are the simplest and most effective context pattern. Use them now.

- Less context, better selected, beats more context poorly selected: Relevance scoring matters more than volume. Do not dump everything into the prompt.

- The biggest cost of stateless AI is quality, not money: Generic, contextually wrong answers waste more time than the tokens they consume.

- Automate context injection: If the user has to manually provide context every session, the system has failed. Good AI context engineering is invisible.